The Macro: The Chat Interface Has a Ceiling

Somewhere around the fifteenth time I asked an AI assistant for a comparison table and got back seven paragraphs of prose instead, I started to feel the friction. Not frustration exactly. More like: this is obviously wrong and everyone knows it and nobody has fixed it yet.

That’s the gap OpenUI is stepping into.

The developer tooling side of AI is genuinely moving fast right now. Open source AI infrastructure in particular is having a moment. The open source services market was valued at around $46 billion in 2025, and Research and Markets projects it crossing $200 billion by 2034. That number is big enough to be almost meaningless, but the underlying signal is real: developers are choosing open, composable tooling over locked-in SaaS stacks wherever they can.

The specific problem of “how should AI apps present information” has mostly been solved by default: text. Markdown if you’re lucky. That default exists not because it’s good but because it’s easy. Every model outputs tokens, tokens are characters, characters become text. Building structured UI on top of that requires extra work, and most teams skip it.

The tools that do tackle this tend to be proprietary. Google has been building generative UI primitives quietly inside its own product surface. There are scattered component libraries and prompt-to-code tools (I’ve looked at a few, most are demos masquerading as infrastructure). Cline’s approach to living directly in the development pipeline is adjacent thinking, just aimed at a different layer of the stack. Nobody has really planted a flag on “here is the open standard for how AI should render UI” and tried to make it stick.

That’s the claim OpenUI is making. It’s an ambitious one.

The Micro: A Protocol for AI That Renders, Not Just Writes

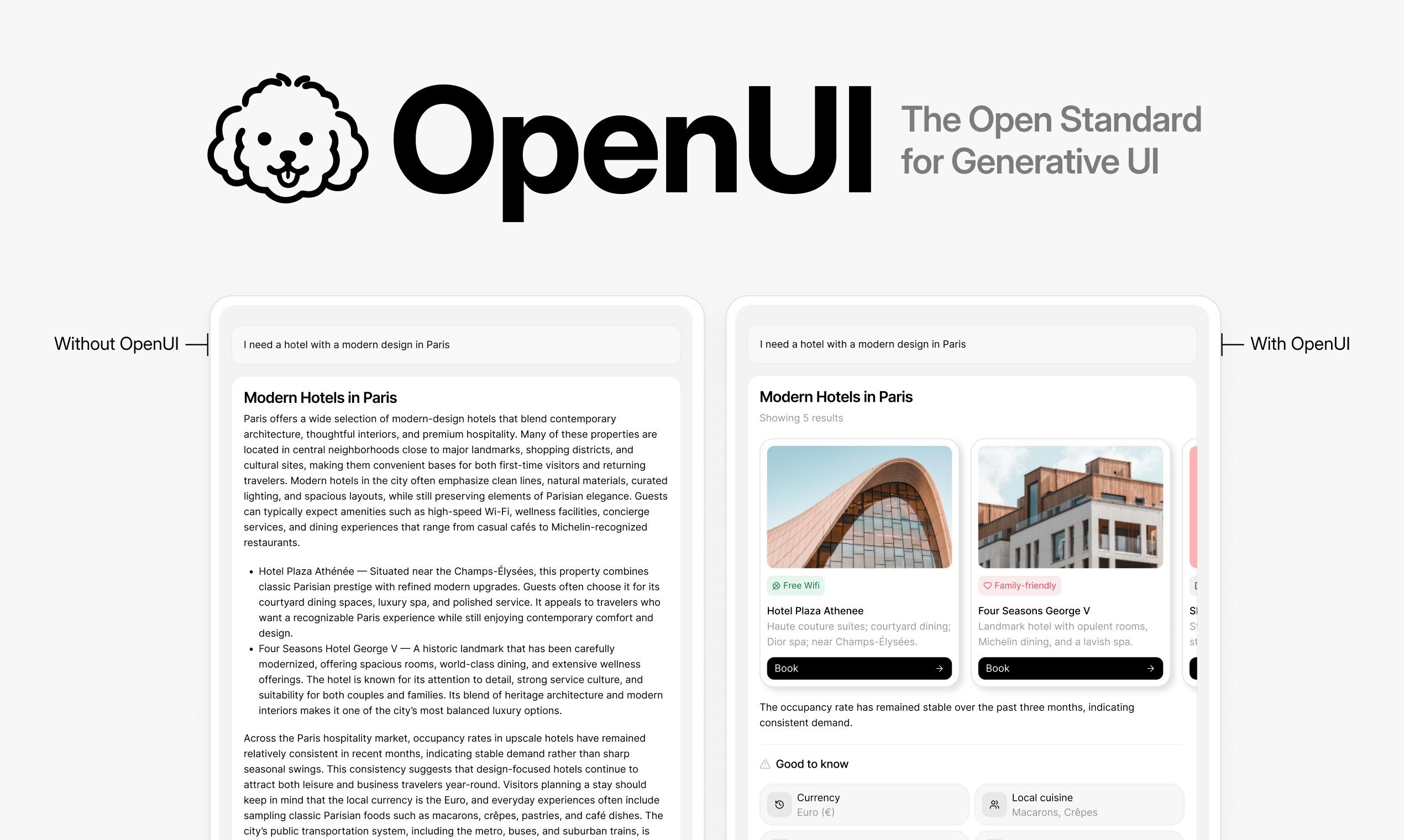

The pitch is clean: instead of your AI app returning a text blob, it returns structured UI components. Cards, tables, forms, charts. Things users can actually interact with.
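To make “structured UI components” concrete, here is a minimal sketch of the idea. The component shapes and the `renderToHtml` function are hypothetical, invented for illustration; they are not OpenUI’s actual schema or API. The point is only that a client can dispatch on a typed payload instead of parsing prose.

```typescript
// Hypothetical component shapes for illustration only; NOT OpenUI's real schema.
type UiComponent =
  | { type: "card"; title: string; body: string }
  | { type: "table"; columns: string[]; rows: string[][] };

// Escape text so model output cannot inject markup into the page.
function escapeHtml(s: string): string {
  return s.replace(/&/g, "&amp;").replace(/</g, "&lt;").replace(/>/g, "&gt;");
}

// Dispatch on the component type; each branch knows exactly which fields exist.
function renderToHtml(c: UiComponent): string {
  switch (c.type) {
    case "card":
      return `<div class="card"><h3>${escapeHtml(c.title)}</h3><p>${escapeHtml(c.body)}</p></div>`;
    case "table": {
      const head = c.columns.map((col) => `<th>${escapeHtml(col)}</th>`).join("");
      const body = c.rows
        .map((row) => `<tr>${row.map((cell) => `<td>${escapeHtml(cell)}</td>`).join("")}</tr>`)
        .join("");
      return `<table><thead><tr>${head}</tr></thead><tbody>${body}</tbody></table>`;
    }
  }
}
```

With a payload like `{ type: "table", columns: ["Hotel", "Price"], rows: [["A", "$120"]] }`, the comparison table I keep asking for arrives as data the client can lay out, rather than as seven paragraphs it has to guess at.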

OpenUI describes itself as “streaming-native and token-efficient,” which matters more than it sounds. Streaming means the UI renders progressively as tokens arrive, so you’re not waiting for a full response before anything appears on screen. Token-efficient means the protocol isn’t bloating your context window with a bunch of scaffolding overhead just to describe a button. Both of these are real engineering constraints that get ignored in a lot of “AI UI” conversations.
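Here is a rough sketch of what “renders progressively as tokens arrive” can look like on the client. I’m assuming a newline-delimited JSON wire format, one component per line, purely for illustration; OpenUI’s real format may look nothing like this, and `StreamingComponentParser` is a made-up name. The buffer holds any partial line until the rest of it shows up.

```typescript
// Assumed wire format: one JSON object per line (an illustration, not OpenUI's protocol).
type UiNode = { type: string; [key: string]: unknown };

class StreamingComponentParser {
  // Holds the trailing partial line between chunks.
  private buffer = "";

  // Feed one streamed chunk; returns only the components that chunk completed.
  push(chunk: string): UiNode[] {
    this.buffer += chunk;
    const lines = this.buffer.split("\n");
    // The last element is either "" or an unfinished line; keep it for the next chunk.
    this.buffer = lines.pop() ?? "";
    return lines
      .filter((line) => line.trim() !== "")
      .map((line) => JSON.parse(line) as UiNode);
  }
}
```

Each call to `push` hands back whatever is ready to draw, so the first hotel card can appear while the second is still mid-stream. A one-line-per-component format also keeps the scaffolding small, which is the token-efficiency half of the claim.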

It works with GPT, Claude, and Gemini (listed as M2.5 in their materials, which I assume refers to Gemini 2.5). It also claims compatibility with agent frameworks like Vercel’s ai-sdk and Google’s ADK. That’s a meaningful list. If you’re building on any of those, you don’t have to swap out your model layer to use this.

The setup is a single CLI command: `npx @openuidev/cli@latest create`. That’s a good sign. Projects that bury their getting-started flow behind ten steps of configuration are usually not actually ready for developers yet.

The playground on their site demos a hotel search query that returns an actual card layout instead of a paragraph. It’s a small thing, but it makes the point immediately. You feel the difference.

It got solid traction on launch day and the GitHub repo is public, which means you can go read the actual spec rather than trust marketing copy.

One thing I’d want to dig into: how opinionated is the component schema? “Open standard” only means something if other tools adopt it. Right now it reads more like a well-designed library than a ratified protocol. That gap matters a lot for whether this becomes infrastructure or just another option.

The Verdict

I think the core observation here is correct. Text-only AI responses are a UX regression and most teams are too deep in the LLM weeds to notice. OpenUI is at least asking the right question.

The open source framing is smart. The way Superset positioned itself as coordination infrastructure for AI coding agents shows there’s appetite for open, developer-owned tooling at the AI layer right now. OpenUI is trying to do something similar one level up, at the presentation layer.

What makes or breaks this at 30 days is adoption breadth. One or two popular frameworks adding native support would change the entire story. Without that, it stays a compelling library rather than a standard.

At 60 days, I’d want to see the component schema documented publicly and versioned. Standards live and die by their specs being stable enough to build against.

At 90 days, the question is whether teams outside of demo projects are actually shipping with it. That’s the only data point that matters.

I’m rooting for it. Not because open source AI UI is a category I have strong feelings about, but because “AI app returns a wall of text” is a problem I experience personally and constantly, and somebody needs to fix it.