The Macro: Nobody Wants to Own the Docs

Here’s a thing that happens constantly in software teams: a developer ships a feature, the code review gets five thoughtful comments, and the documentation update gets merged with an ‘LGTM’ after three seconds of eyeballing. We are extremely good at reviewing code. We have built entire disciplines around it. The docs? Those are someone else’s problem until they aren’t.

The software development tools market sits at around $6.41 billion in 2025, according to Mordor Intelligence, and it’s growing steadily. A lot of that money is flowing into AI coding assistants and code generation. Not much of it is going toward making sure all that AI-generated code gets documented accurately. That’s a gap that looks small until a new engineer spends two days debugging something because the example in the README was written for a version that shipped eighteen months ago.

Technical writing tooling is genuinely fragmented. You’ve got the old guard, platforms like Confluence and Notion that are more about organizing information than reviewing its quality. You’ve got newer AI writing tools that help you generate documentation faster. But the problem isn’t usually the speed of writing. The problem is that documentation rots. Code changes, context shifts, and the docs stay frozen at whatever they were when someone last had time to care.

Tools like Superset, with its approach to managing AI coding agents, are trying to bring structure to the chaos on the generation side. OpenUI is pushing for better AI interfaces that connect behavior to visible output. The pattern here is clear: the AI coding boom has created a lot of new surface area for things to go wrong, and tooling is scrambling to catch up.

Documentation review specifically is a weird niche to try to own. But weird niches with real pain are usually where the interesting bets are.

The Micro: A GitHub Bot That Actually Reads the Docs

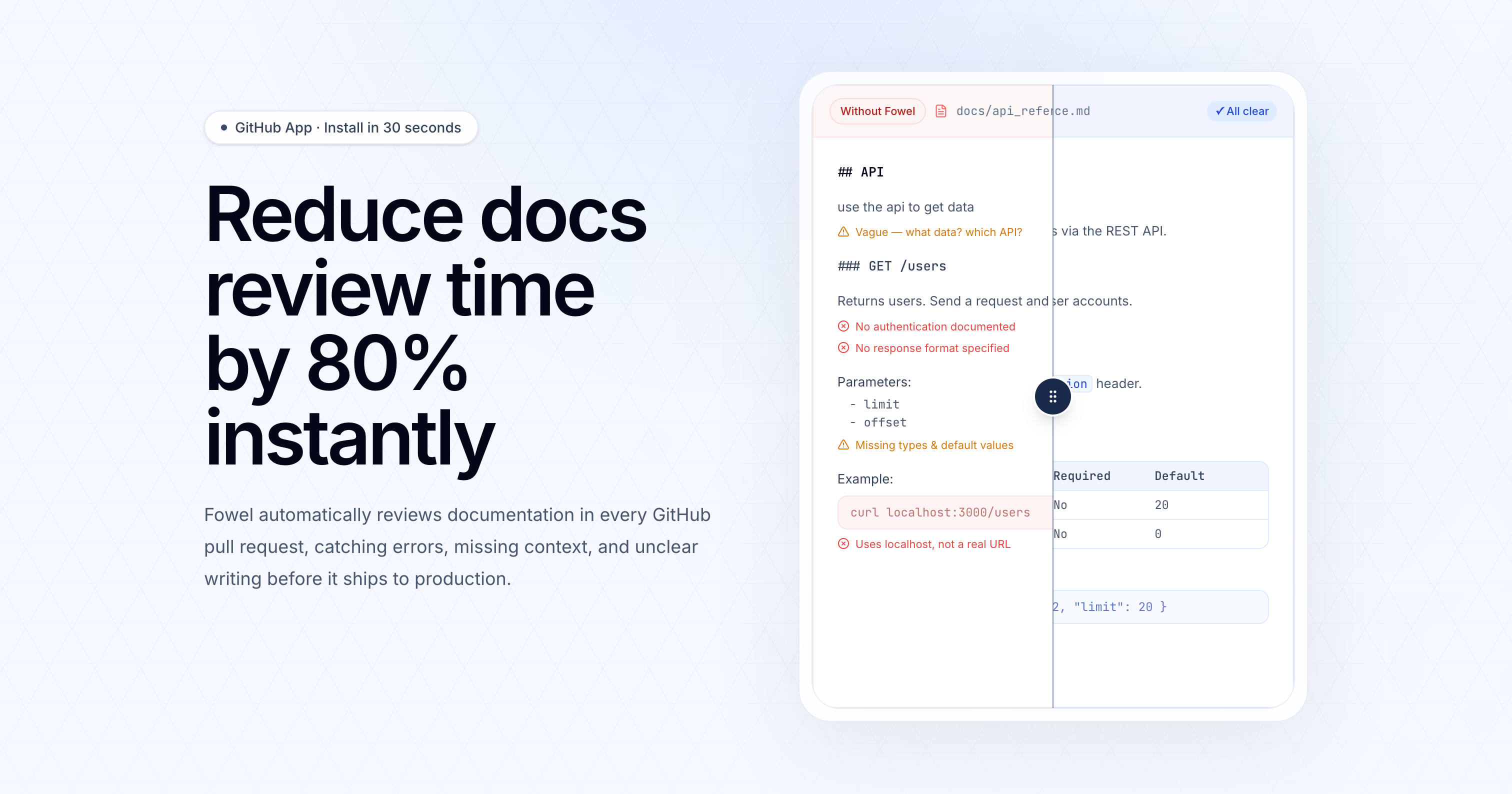

Fowel is a GitHub integration that reviews your documentation as part of your existing pull request workflow. Not a separate platform, not a Notion plugin, not a writing assistant you have to open in a new tab. It sits inside the PR review itself and flags issues before anything merges.

The specific things it says it catches are worth reading carefully: inaccuracies, missing context, outdated code samples, and structural gaps. That’s a meaningful list. Outdated code samples alone are probably responsible for a staggering amount of developer frustration. If Fowel can reliably catch those, that’s a concrete win. The inaccuracies claim is harder to evaluate without knowing how it sources ground truth, which is the question I’d be asking in a demo.
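Fowel doesn’t say how it decides a code sample is outdated. To make the idea concrete, here’s a toy sketch of one narrow version of that check, assuming Python docs that pin packages with `pip install name==version` and a known current version for each package. `find_stale_pins`, `parse_version`, and `mylib` are made up for illustration; this is not Fowel’s method, and a real tool would need far more than a regex.

```python
import re

# Illustrative only, not Fowel's method. Hypothetical rule: a pinned install
# line like "pip install mylib==1.2.0" is stale if the pin is older than the
# package's current release.
PIN_RE = re.compile(r"pip install ([A-Za-z0-9_.-]+)==(\d+(?:\.\d+)*)")


def parse_version(text: str) -> tuple[int, ...]:
    """Turn '1.10.2' into (1, 10, 2); trailing zeros are dropped so 1.2 == 1.2.0."""
    parts = [int(part) for part in text.split(".")]
    while len(parts) > 1 and parts[-1] == 0:
        parts.pop()
    return tuple(parts)


def find_stale_pins(doc: str, current: dict[str, str]) -> list[str]:
    """Return one warning per pinned package that lags its current version."""
    warnings = []
    for lineno, line in enumerate(doc.splitlines(), start=1):
        for name, pinned in PIN_RE.findall(line):
            latest = current.get(name)
            if latest and parse_version(pinned) < parse_version(latest):
                warnings.append(f"line {lineno}: {name}=={pinned} is behind {latest}")
    return warnings


readme = """# Quickstart
Install the client:
    pip install mylib==1.2.0
"""
print(find_stale_pins(readme, {"mylib": "2.0.1"}))
```

A check like this is cheap and rarely wrong, but it only catches version drift. It says nothing about whether the prose around the sample still matches the code, which is the harder part of the problem.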

The install story is aggressive. Thirty seconds, unlimited repositories. That’s a real product decision. They’re not asking you to migrate anything or onboard your team through a multi-step process. You install it, it starts reviewing. That kind of zero-friction entry point matters enormously for developer tools because the alternative is a proof-of-concept that never makes it past one engineer’s personal projects.

The tagline’s claim of an 80% reduction in documentation review time is doing a lot of work. I have no way to verify that number from available information, and I’d want to see the methodology before repeating it to anyone with a straight face. That said, the directional logic isn’t absurd. If reviewers are currently spending any time at all manually reading through doc changes and asking basic questions, automating that first pass buys back real time.

It got solid traction on launch day and currently sits at number eight in the daily ranking, which suggests it’s landing with the developer tools crowd, at least.

The tool is squarely aimed at teams that already have a docs-in-GitHub workflow. If your documentation lives outside version control, Fowel probably doesn’t touch it. That’s a real constraint on the addressable market, though arguably also a feature. Claudebin has been wrestling with the same underlying tension from a different angle: keeping AI tools grounded in what’s actually in the codebase.

The Verdict

I find this one legitimately interesting, with one big caveat.

The positioning is sharp. The install path is right. Putting the review inside the PR is the correct architectural choice because that’s where developers already are and where change control already happens. If Fowel can be accurate enough that reviewers start trusting its flags, it becomes part of the muscle memory of shipping. That’s a good place to be.

The caveat is accuracy. Documentation review is a comprehension problem, not just a formatting problem. A tool that flags missing context reliably is impressive. A tool that hallucinates problems or misses obvious ones gets disabled by one frustrated senior engineer on a Friday afternoon and never gets turned back on. Thirty days in, the number I want to see is the false positive rate. Not how many issues it caught, but how many of its flags were actually worth acting on.
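The metric is easy to compute if you log what reviewers did with each flag. Strictly speaking, “share of flags not worth acting on” is 1 minus precision (sometimes called the false discovery rate), not the textbook false positive rate, but it’s the number that decides whether the bot stays installed. Here’s a minimal sketch, assuming a hypothetical `acted_on` field recorded per flag; Fowel doesn’t document any such export, and the flag messages are invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Flag:
    message: str
    acted_on: bool  # hypothetical: did a reviewer change the docs because of this flag?


def false_flag_rate(flags: list[Flag]) -> Optional[float]:
    """Fraction of flags nobody acted on, i.e. 1 - precision.

    Returns None when there are no flags, since the rate is undefined.
    """
    if not flags:
        return None
    ignored = sum(1 for f in flags if not f.acted_on)
    return ignored / len(flags)


month_one = [
    Flag("code sample imports a removed function", acted_on=True),
    Flag("section lacks setup context", acted_on=False),
    Flag("CLI flag renamed since this was written", acted_on=True),
    Flag("heading hierarchy skips a level", acted_on=True),
]
print(f"{false_flag_rate(month_one):.0%}")  # 25%
```

Counting “acted on” is a proxy: a reviewer can ignore a correct flag out of fatigue, so a rising rate over time is as telling as its absolute value.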

At sixty days, I want to know if teams are expanding usage across repos or quietly scoping it down to low-stakes projects.

Hackmamba has been building in the developer education and documentation space for a while. This feels like a bet that the problem they’ve been adjacent to has finally become acute enough that people will pay to solve it automatically. That bet is not obviously wrong.