The Macro: Why Blockchain Developer Tooling Is Still Embarrassingly Immature

Here is a thing that is true and underappreciated: most blockchain development environments are a mess. Not philosophically, not at the architecture level. Just practically, at the desk, on a Tuesday, when you are trying to test something locally and your test validator does not behave like mainnet and you spend four hours figuring out why.

Solana specifically has a reputation for performance that its developer tooling does not always match. The network is fast. The local simulation story is not.

This matters more than it sounds. The gap between what a developer can simulate locally and what actually runs on mainnet is where bugs live. It is where audits miss things. It is where “it worked in testing” turns into “it failed on chain” and quietly costs people money. Any tool that genuinely closes that gap earns serious attention from the people building there.

The broader open source services market is growing fast, with multiple research firms placing it somewhere between $35 billion and $85 billion by the mid-2030s, depending on how you define the category. Developer tooling for Web3 sits inside that, in a noisier and less mature corner. The tools that win in immature markets are usually the ones that solve a specific, painful, daily problem rather than the ones with the biggest vision statement. I have watched enough of these cycles to find the “infrastructure as code” angle more interesting than another wallet SDK.

Cline has been doing interesting work on what it looks like when developer tooling actually lives inside your workflow, and the pattern holds here. The closer a tool gets to where the real work happens, the stickier it becomes. Surfpool is betting that “where the real work happens” is local simulation.

The Micro: Drop-In Replacement, Drop-In Ambitions

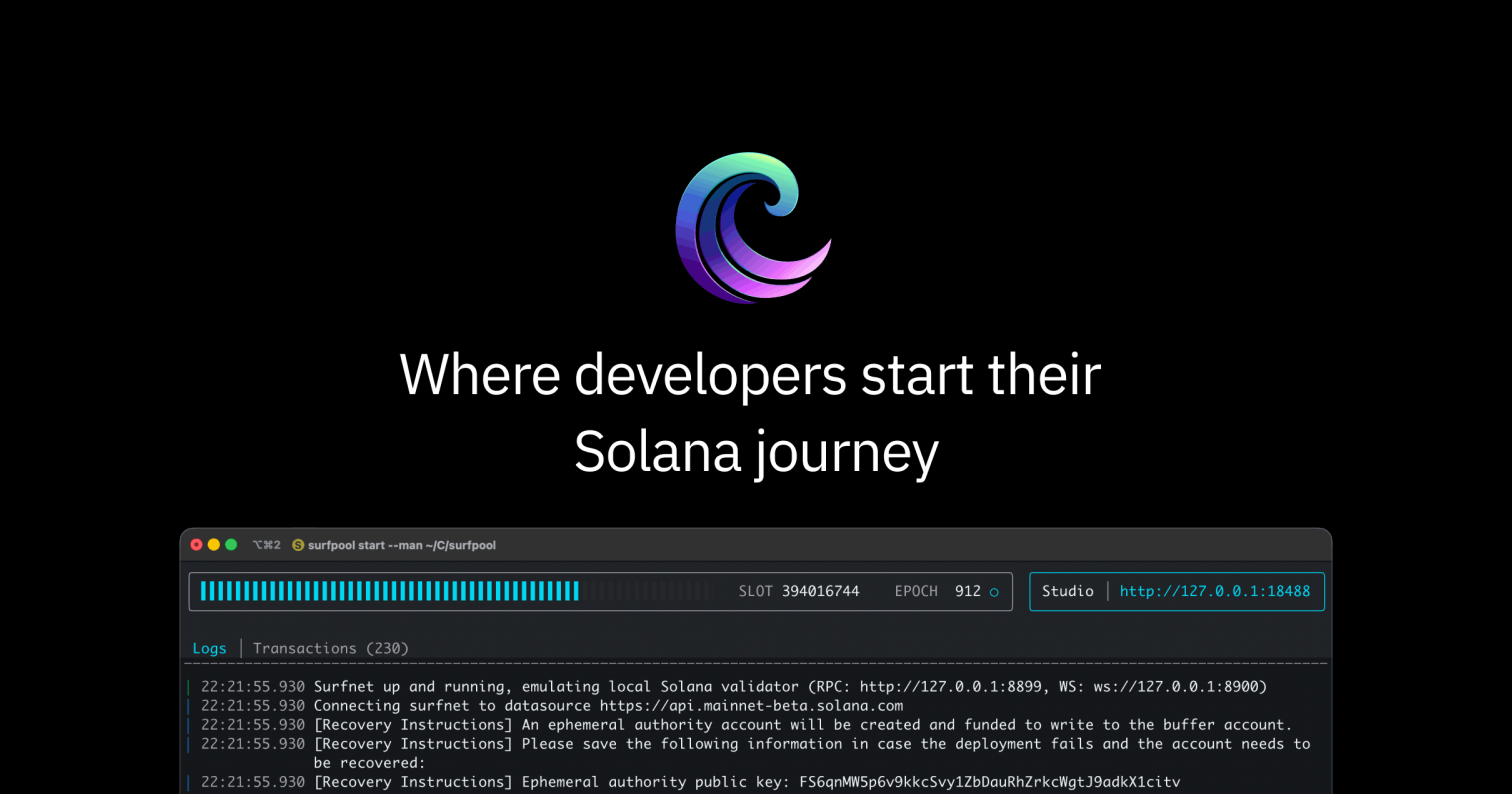

Surfpool describes itself as a drop-in replacement for solana-test-validator, which is the official local testing tool for Solana development. Drop-in replacement is a specific and meaningful claim. It means you do not have to rewrite your workflow. You swap one binary for another and, in theory, things just work better.

The core value proposition is this: Surfpool lets you simulate Solana programs locally using real mainnet accounts. That is the thing that solana-test-validator does not do cleanly. When you test locally with the default tooling, you are testing against a local ledger where any mainnet account you depend on has to be cloned in explicitly, so the environment may not reflect the actual state of the chain. Mainnet has accounts with real balances, real program states, real token configurations. Surfpool pulls that context in, so what you test locally is much closer to what will run on mainnet.
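One way to picture "local simulation backed by real mainnet accounts" is a lazy, fetch-on-miss account store. The sketch below is a conceptual illustration only, not Surfpool's implementation: the `Account` type, the `ForkedAccountStore` class, and the stubbed `fetch_from_mainnet` function are all hypothetical names, and a real tool would replace the stub with an RPC call to a mainnet node.

```python
from dataclasses import dataclass, replace
from typing import Callable, Dict, Optional


@dataclass
class Account:
    """Hypothetical, simplified account record (not the real Solana layout)."""
    lamports: int
    owner: str
    data: bytes = b""


class ForkedAccountStore:
    """Local account store that falls back to a remote source on a miss."""

    def __init__(self, fetch_remote: Callable[[str], Optional[Account]]):
        self._fetch_remote = fetch_remote
        self._local: Dict[str, Account] = {}
        self.remote_fetches = 0  # counts how often we had to go to the remote

    def get(self, address: str) -> Optional[Account]:
        # Serve from the local copy when we already have one.
        if address in self._local:
            return self._local[address]
        # Otherwise pull the account from the remote source and keep a copy,
        # so later local writes never touch the remote record.
        self.remote_fetches += 1
        remote = self._fetch_remote(address)
        if remote is None:
            return None
        self._local[address] = replace(remote)
        return self._local[address]

    def set(self, address: str, account: Account) -> None:
        # Local writes shadow whatever the remote source holds.
        self._local[address] = account


# Stub standing in for mainnet (assumption: a real tool would use an RPC call).
FAKE_MAINNET = {
    "TokenMintAddr": Account(lamports=1_461_600, owner="TokenProgramAddr"),
}


def fetch_from_mainnet(address: str) -> Optional[Account]:
    return FAKE_MAINNET.get(address)


store = ForkedAccountStore(fetch_from_mainnet)
first = store.get("TokenMintAddr")   # miss: fetched from the stub
second = store.get("TokenMintAddr")  # hit: served from the local copy
```

Note what the sketch leaves out: once an account is cached locally it is never refreshed, which is exactly the staleness question any tool taking this approach has to answer.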

That is a genuinely worthwhile problem to solve.

Surfpool also ships with Infrastructure as Code deployment support, which means teams can define their Solana deployment configuration the same way they would define any other cloud infrastructure. Repeatable, version-controlled, reviewable. This is standard practice in traditional software development. It is less standard in Web3, which means someone offering it here is either early or right on time depending on how you read the maturity curve.
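To make the Infrastructure as Code idea concrete, here is a minimal sketch of the declarative pattern it implies: the desired state lives in a reviewable file, and a tool computes the actions needed to reach it. The config schema, program names, hashes, and the `plan` function are all hypothetical and do not reflect Surfpool's actual format.

```python
import json

# Hypothetical desired-state file, as it might be checked into version control.
DESIRED_STATE_JSON = """
{
  "cluster": "devnet",
  "programs": {
    "escrow": {"artifact_sha256": "aaa111"},
    "oracle": {"artifact_sha256": "bbb222"},
    "vault":  {"artifact_sha256": "ccc333"}
  }
}
"""


def plan(desired: dict, deployed: dict) -> list:
    """Compare desired programs with deployed ones and list required actions."""
    actions = []
    for name, spec in sorted(desired["programs"].items()):
        current = deployed.get(name)
        if current is None:
            actions.append(("deploy", name))    # not on the cluster yet
        elif current["artifact_sha256"] != spec["artifact_sha256"]:
            actions.append(("upgrade", name))   # on the cluster, but outdated
        # Matching hashes need no action, which keeps re-runs idempotent.
    return actions


desired = json.loads(DESIRED_STATE_JSON)

# Assumed current state of the cluster, hard-coded here for illustration.
deployed = {
    "escrow": {"artifact_sha256": "aaa111"},
    "oracle": {"artifact_sha256": "old000"},
}

actions = plan(desired, deployed)
```

The point of the pattern is that the plan is a pure function of two states, so it can be reviewed in a pull request before anything touches a cluster.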

The project is open source, lives on GitHub, and got solid traction on launch day.

I want to flag that the product website was not available when I looked at it, which limits how much I can say about implementation details, pricing structure, or team background. What I have is the product description and the feature list, and that is what I am working from. For the kind of developer who would evaluate this, the GitHub repo will tell them more than any marketing page anyway. Tools like this live or die on documentation and community, and I would be checking both before committing.

For context on what it looks like when open source dev tools actually build a following, the Hacker News macOS client story is a useful reference point. Small tools, right audience, real traction.

The Verdict

I think this is a real problem being addressed with a sensible approach. The mainnet account simulation angle is specific enough to be credible, and the Infrastructure as Code framing suggests the team understands how professional development teams actually work, not just how solo hackers prototype.

What I do not know is how complete the implementation is. “Drop-in replacement” is a promise that breaks in interesting ways at the edges. Does it handle all program types? How does it handle account staleness? What is the latency on pulling mainnet state? These are the questions a Solana developer would ask in the first ten minutes of evaluation, and I cannot answer them from what is available.

At 30 days, the signal I would want is whether developers who tried it actually kept using it. Adoption in tooling is easy. Retention is the real number.

At 90 days, the question is community. Does the GitHub repo have active issues? Are people contributing? Is the documentation getting better?

Superset’s approach to agent coordination is instructive here: the tools that grow in developer markets are the ones that make the community feel like collaborators, not users. If Surfpool treats its GitHub as a real feedback channel rather than a support ticket inbox, it has a legitimate shot at becoming the default starting point it claims to want to be.