The Macro: The Dirty Secret Hiding Inside Every Fine-Tuned Model

Everybody wants a domain-expert AI. Nobody wants to talk about what it takes to build one.

The dirty secret is that most of the work isn’t the model architecture or even the compute. It’s the data. Specifically, it’s the several months of labeling, cleaning, validating, and praying that your training set actually reflects the real world and not some intern’s best guess from six months ago. This is the bottleneck that makes enterprise AI projects run eighteen months over schedule.

The AI developer tools market was valued at around $4.5 billion and is projected to hit $10 billion by 2030, according to Virtue Market Research. That’s a lot of money flowing into tooling. But most of it goes toward inference, orchestration, and deployment. The messy upstream problem of actually constructing good training data gets surprisingly little love from the VC-backed tooling crowd.

Where it does get attention, you mostly see two camps. First, synthetic data generators, which are useful but carry a real risk of laundering hallucinations directly into your training pipeline if you’re not careful. Second, human labeling services, which are slow and expensive by definition. Scale AI sits in this space. So does Labelbox. Both are legitimate businesses, but neither is especially friendly to the developer who just wants to spin up a domain-specific classifier over a weekend.

Python keeps eating the world, by the way. The 2025 Stack Overflow Developer Survey showed a seven-percentage-point jump in Python adoption from the previous year. That matters because it tells you who the actual buyers of a Python-native data tooling SDK are, and there are more of them every quarter.

The question was always whether someone could build a cleaner on-ramp between messy real-world documents and production-ready labeled datasets. Lightning Rod is trying to answer that.

The Micro: A Few Lines of Python, One Less Labeling Sprint

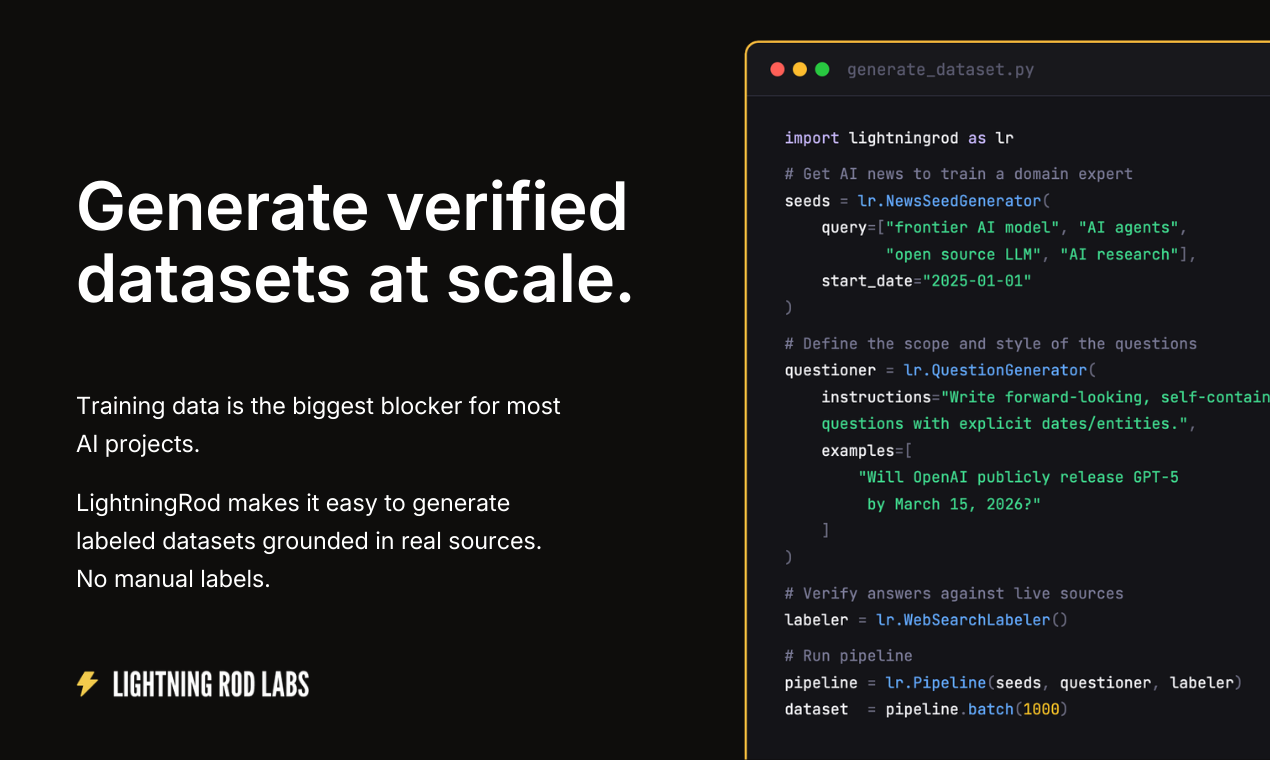

The core idea is straightforward. You point Lightning Rod’s SDK at real-world data sources, things like news articles, SEC filings, regulatory documents, or your own internal document corpus, and it uses verifiable real-world outcomes to generate labels rather than asking a human to assign them manually.

That last part is the genuinely interesting design decision. Instead of synthetic generation or crowdsourced annotation, Lightning Rod anchors its labels to what actually happened. The demo on their site shows a concrete example: a dataset question asking whether the Trump administration would impose 25% tariffs on Canadian goods by a specific date, sourced from a New York Times article, labeled “Yes” with a 0.92 confidence score, with the label sourced from Reuters. The timestamps are right there. The provenance is explicit. That’s a meaningful difference from a synthetic dataset where the “ground truth” is whatever a language model decided felt plausible.
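To make the demo example concrete, here is a minimal sketch of what one outcome-anchored record could look like as a data structure. The class and field names are hypothetical illustrations, not Lightning Rod’s actual schema or SDK API; only the values come from the demo described above, and the resolution date is left unset because it isn’t given here.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class OutcomeLabeledExample:
    """Hypothetical record shape; NOT Lightning Rod's real schema."""

    question: str            # the forecast-style question being labeled
    question_source: str     # where the question was drawn from
    label: str               # the resolved outcome, e.g. "Yes" or "No"
    confidence: float        # confidence in the label, between 0 and 1
    label_source: str        # where the resolving outcome was reported
    resolve_by: Optional[date] = None  # deadline in the question, if known

    def has_provenance(self) -> bool:
        """True when both the question and the label cite a source."""
        return bool(self.question_source and self.label_source)


# Values taken from the demo described in the article; the specific
# deadline is not stated there, so resolve_by stays None.
example = OutcomeLabeledExample(
    question=(
        "Will the Trump administration impose 25% tariffs on "
        "Canadian goods by the stated date?"
    ),
    question_source="New York Times",
    label="Yes",
    confidence=0.92,
    label_source="Reuters",
)
```

The point of the sketch is that provenance lives on the record itself: every label carries the source that resolved it, so it can be audited later.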

Lightning Rod ships as a Python SDK, so setup should be minimal. The docs are live at docs.lightningrod.ai, and the pitch is that you can get to a production-ready training set in hours rather than weeks.

The customer list on the homepage includes Shore Capital, AirHelp, Brunswick Group, Fabletics, and a few others. That’s a noticeably varied set, spanning finance, consumer, and PR. It suggests the use cases aren’t narrowly vertical, which is either a good sign about generalizability or a warning sign about focus, depending on your read.

Lightning Rod got solid traction at launch, landing near the top of Product Hunt’s daily rankings.

I’d be curious how the verification layer handles domains where outcomes are ambiguous or delayed. Financial forecasting has relatively clean ground truth. Something like predicting regulatory sentiment or brand perception is messier. The SDK’s reliability probably varies a lot based on how legible the real-world outcome actually is. That’s not a criticism so much as the obvious next question.
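One way a team might guard against that variability is to filter records before training. The sketch below is an assumption about how you could do it on your own side, not a feature of the SDK: it assumes each record is a plain dict with hypothetical `label`, `confidence`, and `label_source` keys, and the 0.8 threshold is arbitrary. The second and third sample records are invented purely for illustration.

```python
from typing import Any, Dict, List


def keep_trustworthy(
    records: List[Dict[str, Any]], min_confidence: float = 0.8
) -> List[Dict[str, Any]]:
    """Keep records whose outcome is resolved, sourced, and confident enough.

    Keys and threshold are hypothetical; adapt them to your real data.
    """
    kept = []
    for record in records:
        resolved = record.get("label") is not None   # outcome has occurred
        sourced = bool(record.get("label_source"))   # label cites a source
        confident = record.get("confidence", 0.0) >= min_confidence
        if resolved and sourced and confident:
            kept.append(record)
    return kept


sample_records = [
    # Mirrors the demo example described in the article.
    {"topic": "tariffs", "label": "Yes", "confidence": 0.92,
     "label_source": "Reuters"},
    # Invented: outcome has not resolved yet, so there is no label.
    {"topic": "brand perception", "label": None, "confidence": 0.0,
     "label_source": ""},
    # Invented: resolved, but the outcome was ambiguous.
    {"topic": "regulatory sentiment", "label": "No", "confidence": 0.55,
     "label_source": "hypothetical trade publication"},
]

trusted = keep_trustworthy(sample_records)
```

With these sample records only the tariff example survives the filter, which is roughly the pattern you would expect if clean, fast-resolving domains dominate the usable output.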

For anyone who’s spent time thinking about how AI agents handle messy document pipelines, the upstream data quality problem Lightning Rod is targeting is the same one that tends to quietly degrade everything downstream.

The Verdict

I think this is a real problem with a real solution, which already puts it ahead of a lot of what I look at.

The anchoring-to-outcomes approach is the right instinct. Synthetic data is useful, but it’s epistemically fragile in a way that labels tied to real outcomes aren’t. If Lightning Rod can consistently produce datasets with traceable provenance and accurate labels, that’s a legitimate edge over both the labeling services and the generate-and-hope crowd.

The risk at 30 days is adoption friction. Developers need to trust a data pipeline before they put it anywhere near a production model. The confidence scores and source citations in the demo UI are clearly trying to build that trust, but trust takes reps. At 60 days, the question is whether the enterprise customers already on the logo wall are actually using it in production or just piloting. At 90 days, I’d want to know if the SDK is holding up outside the clean use cases, specifically in domains where real-world outcomes are noisy or slow to resolve.

The developer tooling space is full of products that solve a real problem but stall because the buyer is also the skeptic. That tension shows up in a lot of AI tooling right now. Lightning Rod’s best path is probably a few loud public case studies with concrete accuracy numbers. Show the receipts. The tagline is good. The receipts close deals.