The Macro: Your Calls Are Someone Else’s Training Data

Here’s the thing nobody says out loud about Otter, Fireflies, and most of the cloud-transcription crowd: when you record a sales call or a board meeting through their apps, that audio goes somewhere. Usually to a server you don’t control, processed by models you didn’t choose, under terms of service that most people never read (there’s a whole product built around that problem, actually). That’s a reasonable tradeoff for a lot of users. It’s not a reasonable tradeoff for lawyers, therapists, founders talking about unannounced fundraises, or basically anyone in a regulated industry.

The AI meeting notes category has gotten genuinely crowded. Granola raised money and built a following. Otter has been around long enough that people forget it’s still growing. Notion and other all-in-ones have bolted on recording features. The pitch is always some version of: never take notes again, get a summary, search your history.

The privacy angle has been underplayed.

Most of these tools treat on-device processing as a marketing footnote if they mention it at all. The assumption is that users will accept cloud processing because the convenience wins. And for casual use, maybe it does. But there’s a real segment of professionals who would use AI meeting notes if they trusted where the data went, and that segment has been mostly ignored.

That’s the gap talat is pointing at. It’s not a new product category. It’s a deliberate constraint applied to an existing one. Whether the constraint is the whole story or just the opening line is what makes it interesting to watch. Tools like Doraverse already show there’s appetite for meeting infrastructure that takes workflow seriously, not just transcription.

The Micro: A Mac App That Actually Stays on Your Mac

talat is a macOS app. That’s the whole ballgame architecturally. It runs on Apple Silicon and uses the Neural Engine for transcription and summarization, which means the compute happens locally and the audio never touches a remote server. The download is 20 MB. It’s a one-time purchase with all future updates included. That pricing model feels almost retro at this point, and it will probably be the detail that gets shared most in developer forums.

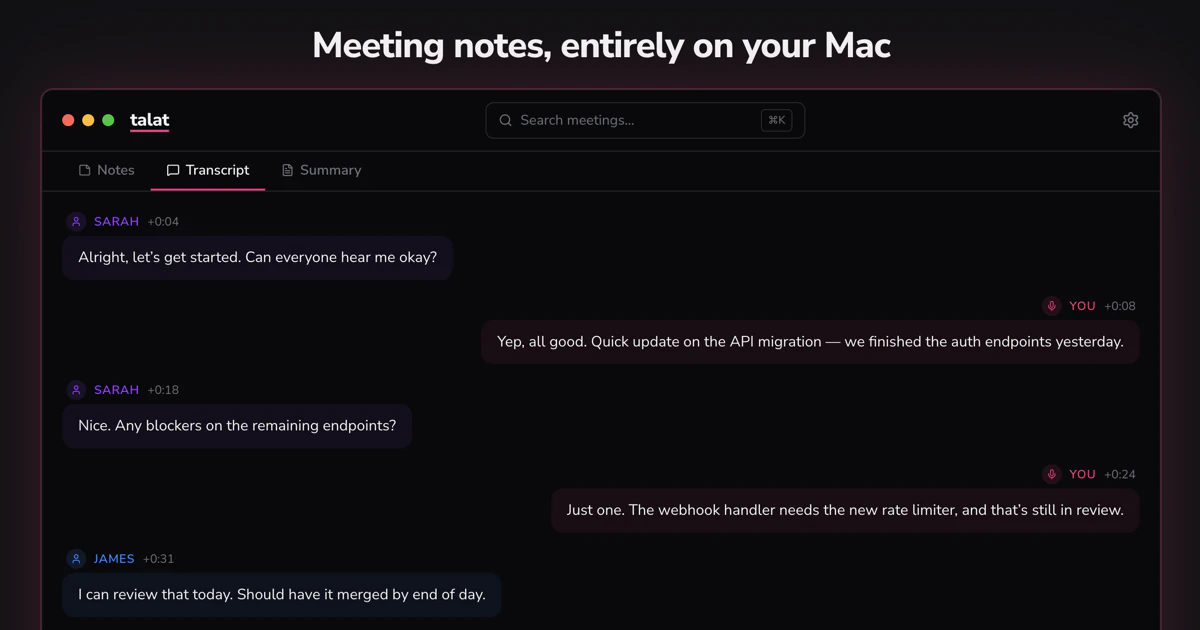

The core flow is simple. You join a meeting in Zoom, Teams, Google Meet, or whatever you already use. talat detects that a conferencing app has grabbed your microphone and starts recording quietly. You get a notification. That’s it. It captures both your mic and system audio, so it transcribes everyone, and it attempts speaker diarization, meaning it tries to label who said what. You can edit speaker assignments in real time, which is a small thing that matters a lot if you’ve ever cleaned up a transcript where the AI decided two people were the same person.

When the meeting ends, a local LLM generates a summary: key points, decisions, action items. Everything is searchable, stored locally, and exportable.

The power-user features are where it gets interesting. You can bring your own LLM provider, write custom summarization prompts, auto-export to Obsidian, push data out via webhooks, or query your meeting history through an MCP server. That last one is notable. MCP (Model Context Protocol) integration means you can wire talat’s notes into other AI tools as a structured data source, which turns it from a notes app into something closer to a local knowledge layer.
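To make the webhook-to-notes idea concrete, here is a minimal sketch of the receiving side: a function that turns a meeting payload into a Markdown note you could drop into an Obsidian vault. The payload shape is an assumption for illustration. The field names `title`, `started_at`, `summary`, and `action_items` are hypothetical and are not taken from talat’s documentation, so check the real schema before relying on any of this.

```python
from datetime import datetime


def render_note(payload: dict) -> str:
    """Format a meeting payload as a Markdown note.

    The payload keys used here are hypothetical; talat's actual
    webhook schema may differ.
    """
    started = datetime.fromisoformat(payload["started_at"])
    lines = [
        f"# {payload['title']}",
        "",
        f"Date: {started:%Y-%m-%d %H:%M}",
        "",
        "## Summary",
        "",
        payload["summary"].strip(),
        "",
        "## Action items",
        "",
    ]
    # Render action items as Markdown task checkboxes, with a
    # placeholder line when the meeting produced none.
    items = payload.get("action_items", [])
    lines += [f"- [ ] {item}" for item in items] or ["- (none)"]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    example = {
        "title": "Weekly sync",
        "started_at": "2025-01-15T10:30:00",
        "summary": "Shipped the beta.",
        "action_items": ["Email the draft"],
    }
    print(render_note(example))
```

Keeping the formatting step as a pure function means the same code can sit behind a local webhook listener or a one-off script over exported files, and nothing in it needs to leave the machine.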

It got solid traction on launch day, which suggests the privacy angle resonates more than the incumbents are giving it credit for.

The team is also explicit that it runs alongside Granola or Otter. No forced switching. That’s a smart zero-friction onboarding move.

The Verdict

I think the on-device constraint is genuinely the right call for a specific kind of user, and that user exists in large enough numbers that this could work. The pricing model (one-time, updates included) is either going to build serious word-of-mouth or create a sustainability problem at 18 months. Probably worth watching.

What I’d want to know at 30 days: does the local transcription accuracy hold up on accented speech and cross-talk, which is where cloud models with massive training sets usually win? At 60 days: are the Obsidian and webhook integrations actually getting used, or are they checkbox features for a README? At 90 days: does the MCP integration pull in any traction from the developer-tools crowd? That’s the distribution vector that could actually break through.

The risk is that privacy as a primary value proposition works well for the first wave of privacy-conscious early adopters and then stalls. The counter-argument is that enterprise compliance requirements are only getting stricter, and a tool that’s genuinely zero-egress has a real answer when IT asks the hard questions.

I’d try it. A 20 MB download and a one-time price make for a very low-commitment ask.