The Macro: The Cloud Bill That Comes for Everyone

Here’s a thing that happens constantly and nobody talks about enough. A team ships a cloud architecture, it works fine in staging, it survives the first few weeks, and then around month three some combination of traffic spikes and suboptimal service choices turns the AWS bill into a small mortgage payment. Postmortems get written. Someone updates a Confluence doc. The cycle repeats.

The infrastructure tooling space has gotten a lot of attention lately, mostly from the AI-assists-your-code angle. Gartner reportedly projects that by 2028, 90% of enterprise software engineers will be using AI code assistants, up from under 14% in early 2024. That’s a massive shift, and most of the energy has gone into the code-writing side of it. Tools like Unblocked, which does AI-assisted code review, and Tessl, Guy Podjarny’s post-Snyk bet on AI-native software, are both playing in adjacent territory.

But infrastructure design specifically (the decisions made before deployment about how your services talk to each other, which regions you run in, and how your load balancers are configured) remains weirdly underserved by tooling that actually validates outcomes rather than just suggesting them.

There are existing players. Terraform and Pulumi handle infrastructure-as-code, but they’re not telling you whether your architecture is good; they’re just codifying whatever you already decided. Cost tools like Infracost and CloudHealth will tell you what something will probably cost or has already cost, but that’s cost analysis, not design validation. Emulation, actually simulating behavior before you deploy, is the gap InfrOS is specifically targeting.

It’s a technically ambitious bet. Whether the market is ready to pay for pre-deployment confidence is the real question.

The Micro: Emulation as a Feature, Not a Buzzword

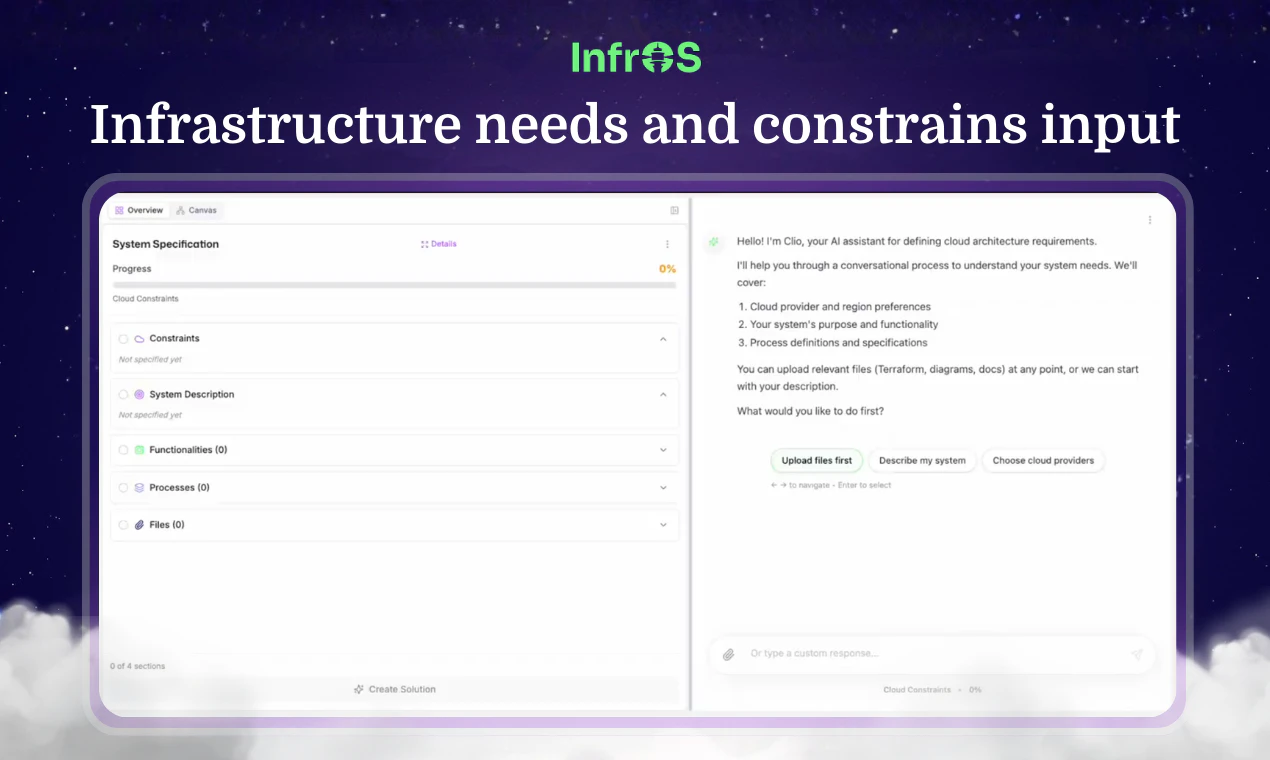

InfrOS is billing itself as a tool that designs and validates cloud architectures before you ship them, and its key differentiator is that it doesn’t just predict outcomes; it emulates them. That word choice matters. Prediction is a model giving you a probability. Emulation is reproducing the system’s behavior in a controlled environment and showing you what happens.

The product appears to work by taking your architecture inputs (your priorities, your constraints, presumably your current or planned cloud setup) and generating an optimized design that aligns to those priorities. Then it runs that design through emulation to validate it before anything touches production. The pitch on their site is pointed: “It doesn’t just predict outcomes, it proves them.”

That framing is doing a lot of work, and it should be tested carefully. Emulation fidelity is genuinely hard. Simulating cloud behavior at scale means making assumptions about traffic patterns, service behavior under load, and failure modes. The value here is entirely contingent on how accurately the emulation reflects real-world conditions. If the emulation is too sanitized, you get false confidence, which might actually be worse than no confidence.
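To make the sanitized-emulation risk concrete, here is a toy sketch. It is not how InfrOS works (its internals aren’t described in its public materials); the function `simulate_peak_backlog`, the capacity of 12 requests per tick, and both traffic shapes are hypothetical. The two traffic patterns carry exactly the same total and average load, but a design that looks fine under smooth traffic builds a real queue once the same requests arrive in bursts.

```python
# Toy illustration only: a fixed-capacity server fed by per-tick arrivals.
# The function name, capacity, and traffic shapes are hypothetical, not InfrOS's model.

def simulate_peak_backlog(arrivals: list[int], capacity: int) -> int:
    """Return the largest queue length seen when `capacity` requests are served per tick."""
    backlog = 0
    peak = 0
    for arriving in arrivals:
        # New requests join the queue, then up to `capacity` of them are served this tick.
        backlog = max(0, backlog + arriving - capacity)
        peak = max(peak, backlog)
    return peak


CAPACITY = 12  # hypothetical requests served per tick

# Same total load (1,000 requests over 100 ticks), very different shapes.
smooth_traffic = [10] * 100          # what a "sanitized" emulation might assume
bursty_traffic = [40, 0, 0, 0] * 25  # the same requests, arriving in bursts

if __name__ == "__main__":
    print("smooth peak backlog:", simulate_peak_backlog(smooth_traffic, CAPACITY))
    print("bursty peak backlog:", simulate_peak_backlog(bursty_traffic, CAPACITY))
```

Running it prints a peak backlog of 0 for the smooth pattern and 28 for the bursty one. An emulator that only models averages would call both cases safe, which is exactly the false confidence the paragraph above warns about.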

That said, the partners listed on their site include EY, Red Hat, and what appear to be some defense-adjacent organizations. That partner mix suggests they’re targeting teams with serious infrastructure requirements, not just indie devs trying to keep their Heroku bill down. (There’s a Reddit thread where someone actually recommends InfrOS for cost management in a micro-SaaS context, which is a different customer profile entirely. Could be interesting, could be scope creep.)

The product is also SOC 2 Type II certified, which is the kind of thing that matters a lot if you’re selling to enterprise and means very little if you’re not.

It got solid traction on launch day, which tracks given the specificity of the problem it’s solving.

The “evolve infrastructure with control over time” part of the pitch is underdeveloped in their current materials. I’d want to see what that actually looks like in the product.

The Verdict

I find the core idea genuinely compelling. Pre-deployment emulation for cloud architecture is the kind of thing where, once you’ve seen it work, you can’t really justify going back to guessing. The pain it’s solving is real and expensive, and I’ve watched smart teams get burned by it repeatedly.

The thing that could kill it is fidelity drift. If the emulation doesn’t accurately model what real prod looks like under real conditions, the whole value proposition collapses and you’ve just added a step to the deployment process that makes everyone feel better while solving nothing. A comparison with mTarsier’s approach to config management is useful here: the tools that stick are the ones where the abstraction actually holds under pressure.

At 30 days I’d want to know: what’s the gap between emulation results and actual post-deployment behavior for current customers? At 60 days: are the enterprise partners on the website actual paying customers or logo relationships? At 90 days: does the “evolve over time” angle have enough product behind it to be a retention hook, or is this a point solution that gets used once per deployment cycle?
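The 30-day question is at least measurable. Here is a minimal sketch of one way to score it, assuming you have the emulator’s predicted metrics and the matching production measurements for the same deployment; `fidelity_gap` and the metric names are hypothetical, and relative error is just one reasonable yardstick, not anything InfrOS publishes.

```python
def fidelity_gap(emulated: dict[str, float], observed: dict[str, float]) -> dict[str, float]:
    """Relative error of each emulated metric against the value observed in production.

    Metrics missing from the emulated side, or with an observed value of zero, are
    skipped because relative error is undefined for them.
    """
    gaps: dict[str, float] = {}
    for metric, actual in observed.items():
        if metric not in emulated or actual == 0:
            continue
        gaps[metric] = abs(emulated[metric] - actual) / abs(actual)
    return gaps


if __name__ == "__main__":
    # Hypothetical numbers for illustration, not real InfrOS or customer data.
    emulated = {"p99_latency_ms": 120.0, "monthly_cost_usd": 900.0}
    observed = {"p99_latency_ms": 150.0, "monthly_cost_usd": 1000.0}
    for metric, gap in fidelity_gap(emulated, observed).items():
        print(f"{metric}: {gap:.0%} off")
```

With these made-up inputs it reports latency 20% off and cost 10% off. Tracked across real deployments, a gap that stays small is the evidence behind “it proves them”; a gap that widens over time is fidelity drift.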

According to his LinkedIn, CEO Naor Porat comes from cloud and hybrid strategy work. The CPTO, Harel Dil, is out of Tel Aviv University. The team looks like it has the infrastructure depth to actually build what they’re describing.

I want to see the emulation work. If it does what it says, this is a real product.