The Macro: The AI Code Review Land Grab Is Already Crowded

AI developer tooling in 2026 is a weird place. The category is simultaneously overflowing and underdelivering. GitHub Copilot does code suggestions. CodeRabbit does PR reviews. Sourcegraph’s Cody does a lot of things. A dozen smaller players all claim they’ll make your pull requests less painful. The market data explains why everyone’s piling in. Software engineering as a category sits around $65–73 billion in 2024–2025 and is tracking toward $205 billion by 2035, per Market Research Future. There’s real money moving through this space.

The irony is that the engineering job market itself has contracted sharply. Engineering headcount is reportedly still about 22% smaller than it was in January 2022, according to The Pragmatic Engineer’s newsletter. That compression creates a specific kind of pressure: smaller teams doing more review work with less bandwidth. That’s exactly the gap AI code review tools are trying to fill. Whether that’s a genuine opportunity or just a pile of VC money chasing the same thesis depends on who you ask.

The actual product differentiation battle comes down to one question.

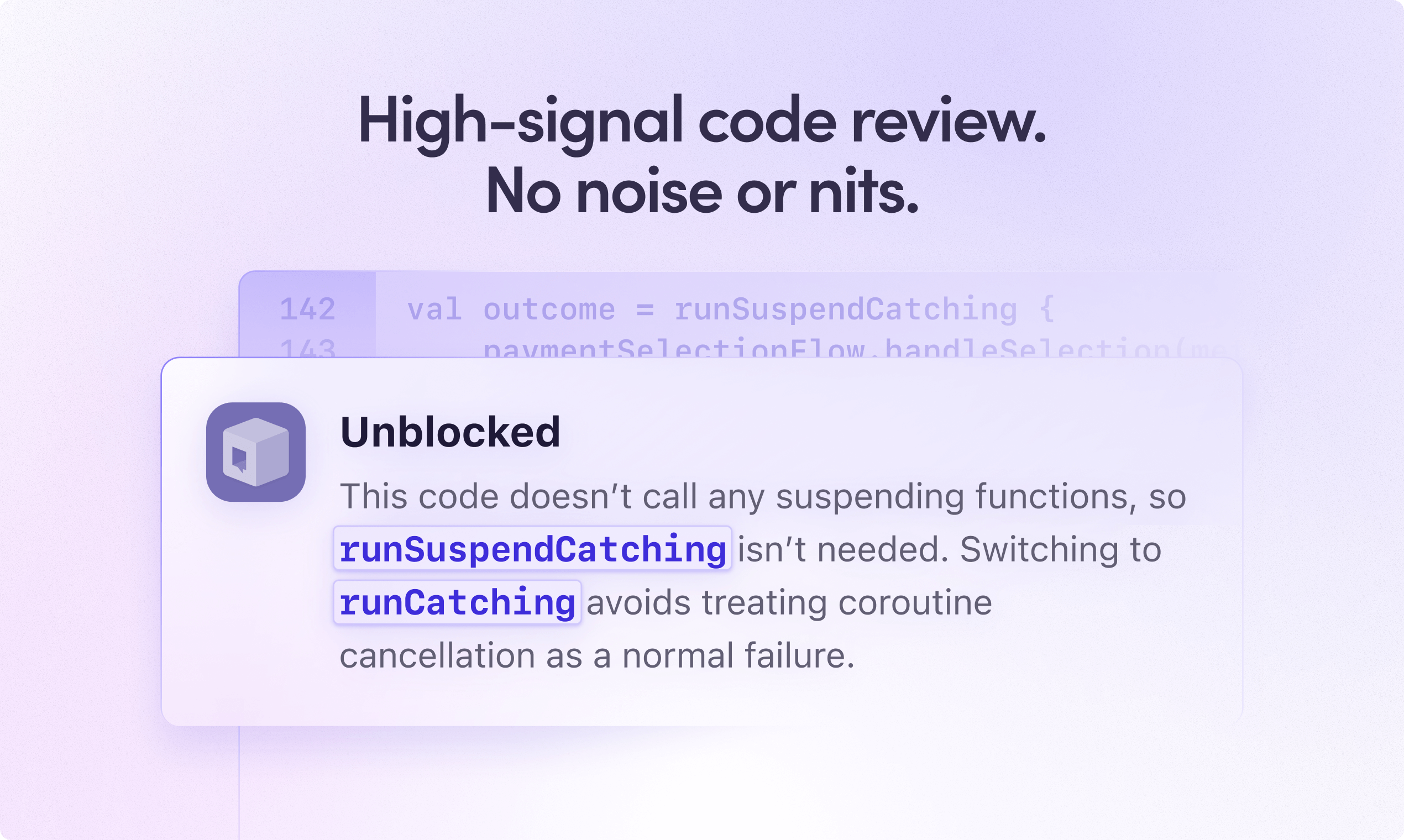

Does the AI understand your codebase, or is it just running a generic linter with a language model strapped on top? Most first-generation tools are closer to the latter. They see the diff, they leave comments, they occasionally flag something useful. The problem is that a diff stripped of context is a pretty thin slice of what a senior engineer would actually care about. Why was this pattern chosen? What did the team decide six months ago in that Slack thread nobody can find? What does this PR break that the tests don’t cover? That’s the gap the better tools are now competing to close, and it’s a legitimately hard problem.

The Micro: It Read Your Jira Tickets (No, Really)

Unblocked’s core technical claim is that it pulls context from across your actual working environment. Not just the repo, but Slack, Jira, PR history, docs. It uses all of that to inform what it flags. The practical implication is meaningful. Instead of a comment that says “this function is too long” (cool, thanks), you might get a comment that says “this conflicts with the pattern your team adopted in PR #847 based on the discussion in #eng-backend on March 12th,” with a citation. That’s a different artifact entirely.

The citation model matters specifically because AI review comments have a credibility problem.

They sound authoritative but you can’t verify them quickly. It’s the same issue as confident-but-wrong search results. Surfacing the source of a recommendation addresses that at the product level rather than just hoping users trust the output. If Unblocked actually links back to those sources consistently, that’s a real design decision, not a feature bullet.

On the launch: it got solid traction on launch day. The testimonials on the product site are from engineers at Clio, TravelPerk, Auditboard, HeyJobs, and Drata. Those are recognizable names in mid-market SaaS, which suggests actual enterprise traction rather than friendly beta users filling out a feedback form.

Dennis Pilarinos is listed as Founder and CEO on LinkedIn. Beyond that, I don’t have clean data on team background, funding, or user numbers, so I won’t fill in gaps with guesses. The 21-day free trial with no card required is a smart move for a product that needs engineers to experience it before they’ll fight for budget internally.

The Verdict

The architecture makes sense. The frustration it’s targeting is real. Every engineer has been burned by an AI reviewer that left twelve comments about variable naming and completely missed the race condition sitting two functions down. If Unblocked’s context-stitching actually works consistently, and that’s a meaningful if, it’s solving something that matters.

What will determine whether this succeeds at 30, 60, 90 days isn’t launch momentum.

It’s retention after the trial. The first PR review that surfaces a genuinely non-obvious issue with a real citation is a conversion event. The tenth one that does it is a sticky product. The failure mode is that context retrieval adds latency or noise of its own. Too much irrelevant history pulled in, confident-sounding wrong answers with better footnotes. That would be worse than a dumb linter.

A few things I’d want to know before fully endorsing it: how does review quality hold up on larger, messier repos compared to cleaner mid-sized codebases? What does the false-positive rate look like on the supposedly high-signal comments after a month of real use? And what does pricing look like past the trial, because that’s where enterprise PLG tools either convert or quietly get uninstalled.

I think this is probably worth trying if your team is drowning in PR volume and your current tooling is leaving obvious things on the table. I’d be more cautious recommending it to teams with massive, legacy-heavy repos where context retrieval could get noisy fast. Worth watching either way.

Also featured on HUGE: Guy Podjarny Built Snyk to Fix Insecure Code. Now He Wants to Fix the AI Writing It. · Ellie Lives in Slack So You Don’t Have to Live in Jira · PinMe Wants You to Deploy a Website Before You Even Remember to Make an Account