The Macro: The Dashboard Is Dead, Long Live the Chatbox

Engineering teams in 2026 are drowning in context that technically exists somewhere. GitHub has your PR status. Jira has your sprint. Linear has your cycle time. Sentry has your incident. PostHog has your funnel. None of them talk to each other in a way that helps the engineering manager who just got asked in standup why checkout conversion dropped 12% after Thursday’s deploy.

Not a new problem. But the tooling to actually solve it is newer than it looks.

The Slack angle matters here. Multiple sources put Slack’s 2025 revenue projection around $4.2 billion, and its parent Salesforce has been publicly positioning it as an “agentic OS” since Dreamforce 2025. The platform is clearly trying to be the layer where AI agents live, not just a place where you paste links to dashboards. That’s a real tailwind for anyone building Slack-native tooling right now, and it’s the kind of platform bet that turns a niche product into an obvious category.

The competitive field is crowded in spirit but weirdly thin in execution. Jira and Linear both have their own AI features. Linear’s are reasonably good. But they’re islands. They answer questions about themselves. The actual gap is cross-tool reasoning: what happened, across all your systems, when X shipped. Most tools punt on that. Atlassian Intelligence exists and is fine if your entire world is Atlassian, which it mostly isn’t. Zapier and Make can wire things together, but that’s automation, not conversation. The genuine “ask a question in Slack and get an answer that synthesizes GitHub, Jira, Sentry, and PostHog” space has a few players circling it. Nobody has obviously won it yet. That’s either an opportunity or a sign that it’s harder than it looks. Probably both.

The Micro: What Ellie Actually Does When You Ask

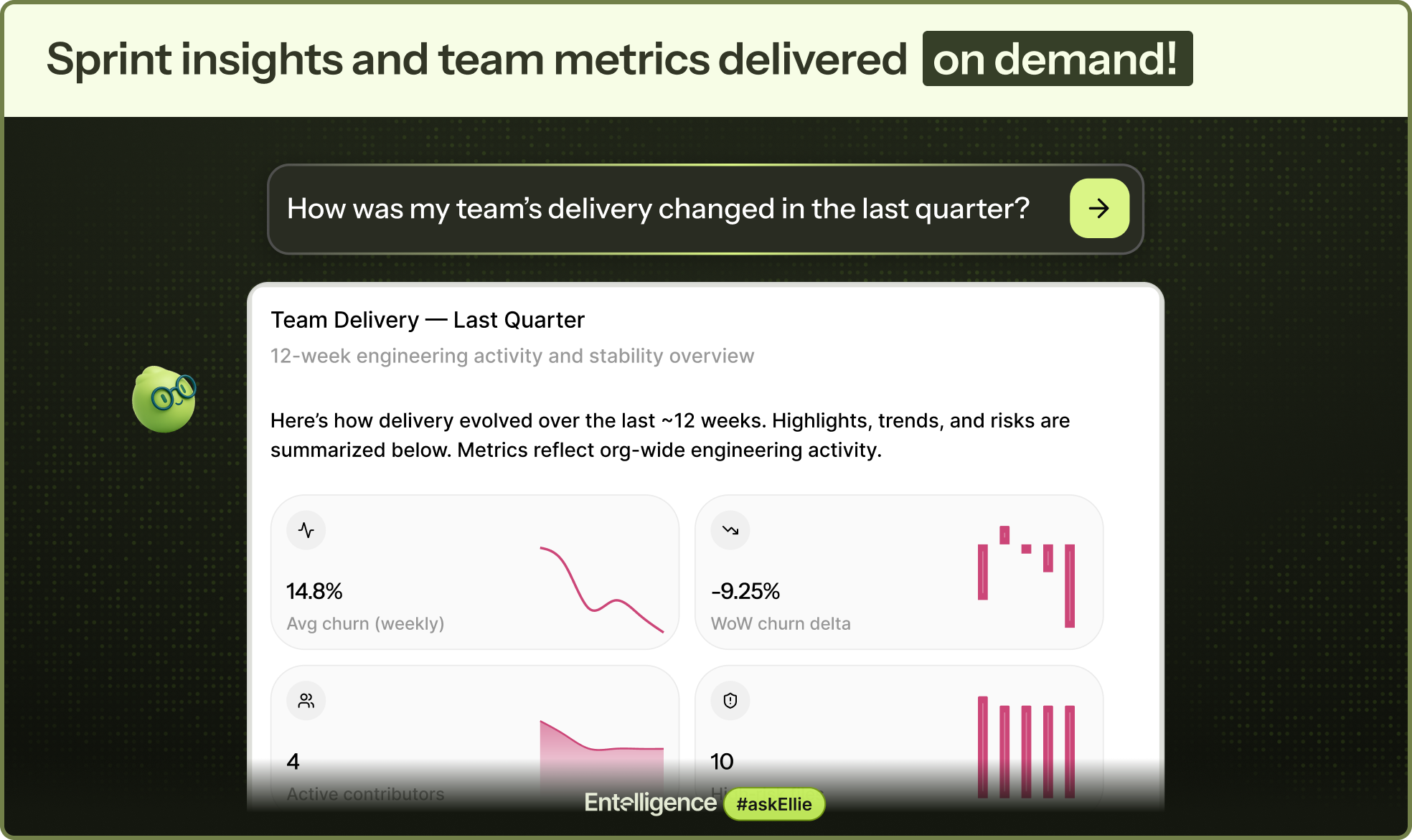

Ask Ellie, built by Aiswarya Sankar’s company Entelligence.AI, is a Slack-native AI agent that connects to GitHub, Jira, Linear, Sentry, PostHog, and a few others, and lets engineering teams ask questions in plain language. The ticket-creation angle is real but undersells it. Yes, you can turn a Slack thread into a GitHub issue or a Linear ticket. The more interesting capability is the query side. Who’s blocking what? Which teams have the worst cycle time? Are users affected after the last release? Ellie pulls from your actual connected data to answer those.

The technical bet is that retrieval across heterogeneous APIs, each with different data models, rate limits, and auth schemes, is solvable in a way that produces answers useful enough to act on.

That’s non-trivial. PostHog and GitHub don’t really want to be in the same query. Mapping a production incident in Sentry back to the PR that caused it, then surfacing the sprint context from Linear, requires real plumbing underneath the natural language layer. The product page hints at this with questions like “How much AI-generated code hit production?” and “What’s the ROI of our AI adoption?” Those aren’t simple API calls.
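To make that plumbing concrete, here is a minimal sketch of the incident-to-PR-to-sprint join described above. It is not Ellie's implementation, and nothing here reflects the real Sentry, GitHub, or Linear API shapes. Every class, field, and function name (`Incident`, `PullRequest`, `Ticket`, `trace_incident`) is hypothetical. It assumes each tool's records have already been fetched and normalized, that an incident carries the commit SHA of the release where it first appeared, and that a PR records the ticket key it closes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Incident:
    """Hypothetical stand-in for an error-tracker issue."""
    incident_id: str
    release_sha: str  # assumed: SHA of the release where the error first appeared


@dataclass(frozen=True)
class PullRequest:
    """Hypothetical stand-in for a merged pull request."""
    number: int
    merge_sha: str
    ticket_key: Optional[str]  # assumed: ticket referenced by the PR, if any


@dataclass(frozen=True)
class Ticket:
    """Hypothetical stand-in for a sprint/cycle ticket."""
    key: str
    cycle: str
    assignee: str


def trace_incident(incident, pull_requests, tickets):
    """Join an incident to its PR by commit SHA, then to its ticket by key.

    Missing links are returned as None rather than guessed, so a caller can
    say "unknown" instead of inventing an answer.
    """
    pr_by_sha = {pr.merge_sha: pr for pr in pull_requests}
    ticket_by_key = {t.key: t for t in tickets}

    pr = pr_by_sha.get(incident.release_sha)
    ticket = ticket_by_key.get(pr.ticket_key) if pr and pr.ticket_key else None

    return {
        "incident": incident.incident_id,
        "pr": pr.number if pr else None,
        "ticket": ticket.key if ticket else None,
        "cycle": ticket.cycle if ticket else None,
        "assignee": ticket.assignee if ticket else None,
    }


# Illustrative data only; none of these values come from a real system.
prs = [PullRequest(number=101, merge_sha="abc123", ticket_key="ENG-7")]
tickets = [Ticket(key="ENG-7", cycle="Cycle 14", assignee="dana")]

linked = trace_incident(Incident("INC-1", "abc123"), prs, tickets)
unlinked = trace_incident(Incident("INC-2", "ffff00"), prs, tickets)
```

The join itself is trivial once the keys line up. The hard part the article points at is everything this sketch assumes away: getting consistent SHAs and ticket keys out of tools that were never designed to share them, and deciding what to say when a link is missing.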

It got solid traction on Product Hunt on launch day, but the comment count is the more interesting signal. Forty-eight comments means a lot of people had something to say, which usually means the product is touching a real pain point. What’s visible externally suggests the core use case is landing with engineering managers and team leads specifically: the people who spend the most time synthesizing context scattered across six different tools.

The Verdict

Ask Ellie is solving a real problem with a plausible approach, and the timing is genuinely good. Slack’s platform ambitions and the maturation of tool-calling in LLMs make this more viable in 2026 than it would have been in 2022. The question isn’t whether the problem is real. It’s whether the answers are good enough to replace the habit of just opening four tabs.

At 30 days, the signal to watch is retention among teams that connect more than two integrations. Single-integration users will churn. They’re not getting the cross-tool synthesis that’s the whole point. At 60 days, answer quality under adversarial conditions is what matters: incomplete data, ambiguous Slack threads, repos with messy commit hygiene. That’s where AI agents usually start falling apart. At 90 days, the question is whether engineering leaders are making actual decisions based on Ellie’s output or just using it as a slightly fancier search shortcut.

I’d want to know what happens when Ellie is confidently wrong about a production incident.

The trust bar for engineering context is high. You cannot hallucinate a sprint velocity and expect anyone to overlook it for long. If the team has good answers to that failure mode, this is worth watching closely. If they don’t, it becomes an expensive novelty that gets quietly disconnected after the first bad incident review.
