The Macro: Your Identity Is Already Being Monetized. Just Not By You.

Somewhere right now, a generative AI model is probably being trained on your face. Or your voice. Or content you made and posted publicly under the reasonable assumption that a human would see it and move on. That assumption is now obsolete.

The privacy technology market is growing fast enough that analysts can’t agree on exactly how fast. Estimates for where it lands by the early 2030s range from around $12 billion to well over $31 billion, depending on which research firm you ask and what they’re counting. The variance is wide, but the direction is unanimous: every major estimate points up.

What’s driving it isn’t just corporate compliance anxiety, though that’s real. It’s something more personal and harder to ignore: the gap between what people think happens to their digital identity and what actually does. Many AI models are trained on scraped public data. Likeness rights, historically a concern for celebrities and athletes, are suddenly relevant to anyone with a social media presence. The legal frameworks haven’t caught up. GDPR and the California Consumer Privacy Act created some scaffolding, but enforcement is patchy, and filing a claim without a lawyer is genuinely confusing for most people.

The companies operating in this space come at it from different angles. Some focus on enterprise deepfake detection. Others handle watermarking or brand protection. Techshark lists WEIR AI alongside competitors under what it calls AI-powered identity rights platforms, a category that barely had a name three years ago.

That’s the interesting moment here. This isn’t a crowded market with obvious winners. It’s a market that’s assembling itself in real time, and the early entrants are still figuring out which problem is actually the one people will pay to solve.

The Micro: Monitor, Claim, License. In That Order.

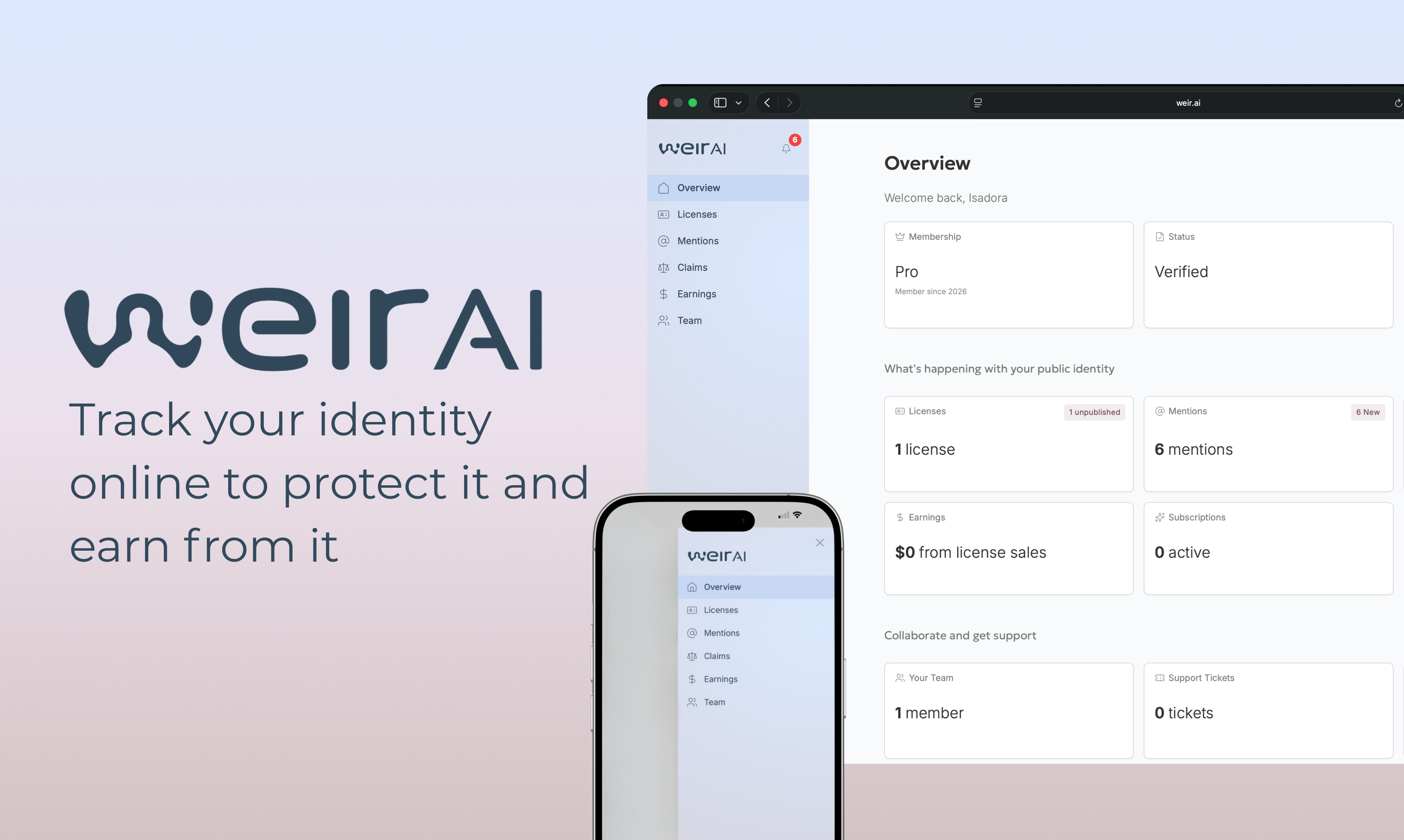

WEIR AI’s pitch is built around a three-part framing: find where you appear online (including places you didn’t put yourself), set the terms under which your identity can be used, and then either protect it or get paid when others use it. That third option is the one that caught my attention.

Most privacy tools stop at detection. They’ll tell you your data appeared in a breach or that your photo turned up somewhere it shouldn’t be. WEIR AI is trying to go a step further by adding a licensing layer. The idea, as one LinkedIn post describing the product put it, is that consent becomes machine-readable, not a PDF buried in a legal folder. That’s a genuinely interesting design ambition, if they can execute it.

The platform offers public identity checkups, monitoring for mentions (including ones that don’t surface through normal search), and a mechanism to file claims or license use on your own terms. The CEO, Gary McCoy, describes the company as focused on empowering individuals to control how AI interacts with their digital presence. His background is in product and strategy, with a stated focus on AI governance and ethics.

The product got solid traction on launch day, which tells you the problem resonates even if it doesn’t tell you whether the solution holds up under daily use.

The thing I keep turning over is the monetization piece. Teaching people that their identity has value they can capture is a real behavior change. It works for creators who already think in terms of licensing. It’s a harder sell to someone who just wants a photo of themselves removed from a website they’ve never heard of. Those are two different users with two different jobs to be done, and I’d want to know whether the product serves both or quietly prioritizes one.

The comparison point that comes to mind is how ElevenLabs approached voice identity as something to build products around rather than just regulate. WEIR AI is attempting a similar reframe, but for your whole digital self.

The Verdict

I think the problem WEIR AI is working on is real, urgent, and genuinely underserved. I also think they’re threading a needle that’s easy to drop.

The protection use case and the monetization use case are not the same user psychology. Someone panicking about a deepfake of themselves wants it gone, fast. Someone looking to license their likeness to an AI training dataset is running a small media business. Building one product that elegantly serves both without confusing either requires real product discipline.

At 30 days, I’d want to see how the claims process actually works. Is it automated? Does it require the user to do legal legwork? At 60 days, I’d want to know whether the monitoring catches what it says it catches, specifically on AI-generated content, not just indexed web pages. At 90 days, the question is whether anyone has successfully licensed their identity through the platform, and for how much.

The market context is favorable. The regulatory tailwind is real. And as the relationship between AI tools and user trust keeps getting more complicated, the AI identity rights space is only going to get more contested.

WEIR AI is early and the platform is unproven at scale. But the bet they’re making, that individuals will want infrastructure for their identity the same way businesses want infrastructure for their data, is not a crazy one.