The Macro: AI Agents Are a Team Sport Now, Just Not Yet

The productivity software market is enormous and growing in ways that are hard to pin down. According to Precedence Research, it's expected to reach around $264 billion by 2034, up from roughly $71 billion in 2024. Grand View Research sizes the AI productivity tools segment specifically at about $8.8 billion in 2024, growing at 15.9% annually through 2033. Headline figures like these are useful, but they obscure the more interesting shift happening underneath them.

What’s actually changing is the unit of deployment. For the past two years, AI tooling has been essentially a single-player experience. You get a license, you set up your environment, you figure out what works. The organizational layer barely exists.

OpenClaw, the open-source AI agent framework, has become a focal point for a certain kind of technical team. It’s powerful. It’s self-hostable. It’s also, in practice, a solo setup that requires meaningful engineering knowledge to configure and maintain. The gap between “one engineer who built this out” and “the whole team using it” is large and mostly unaddressed.

That gap is the market CoChat is playing in, and it isn't alone there. Superset is going after the agent orchestration problem from the developer-tooling side, and the broader category of AI-native team infrastructure is getting crowded fast. The interesting question is who owns the access and security layer, because right now almost nobody handles it well.

Most teams that are “using AI agents” are really just using one person’s Claude account forwarded into Slack. The infrastructure story is still getting written.

The Micro: One Thread, Every Agent, No SSH Required

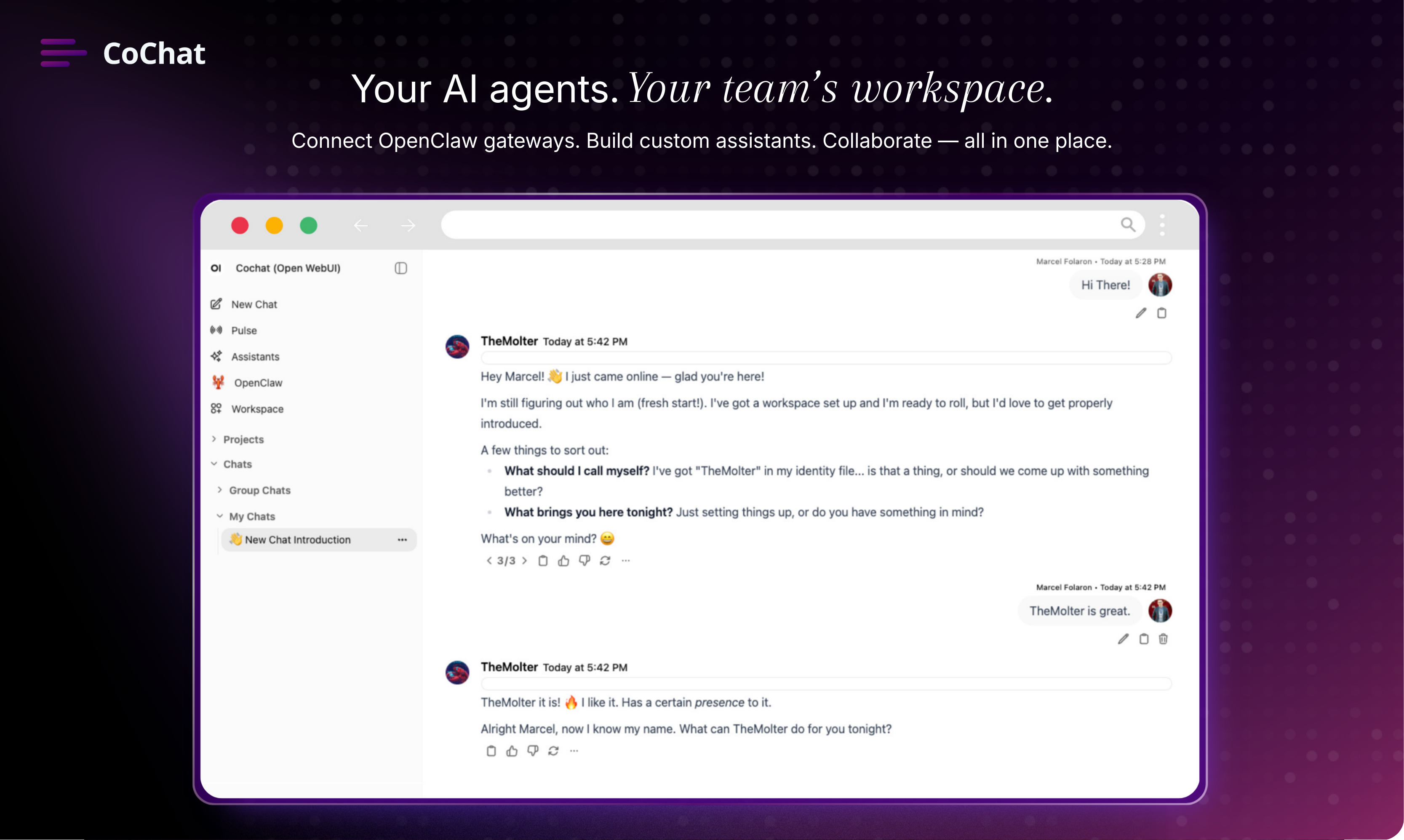

CoChat's core product is a shared interface that connects to your existing OpenClaw or KiloClaw instance and makes it accessible to your whole team without touching the underlying setup. You connect your instance once, through a CoChat-managed or self-hosted gateway, and everyone on your team gets access to the same agents, tools, and memory. No one needs terminal access. No one needs to know the instance is running on a Mac mini under someone's desk.

That’s the pitch, and it’s a specific one.

The collaborative thread model is the part I find most interesting. Rather than everyone running separate agent sessions and stitching together outputs afterward, CoChat puts humans and agents in the same thread. You can bring in a specific agent mid-conversation, hand off a task, loop in a colleague, and produce something together. The design logic here is that the bottleneck isn’t individual AI output quality. It’s coordination.

There's also a built-in security audit layer. CoChat automatically scans connected instances for misconfigurations, known CVEs, and unsafe skill setups. According to the product website, when it finds something it explains what's wrong and offers an actionable fix, rather than producing a report no one reads. That's a small but real product decision: security tooling that generates findings without remediation paths gets ignored.

Agents can carry persistent memory, personality settings, and scheduled tasks. The idea is that these aren't just query-response tools but something closer to standing team members with defined roles.

Joseph Roberts is listed as a founder of CoChat AI. Marcel Folaron, listed as founding engineer, is also a co-founder of the project management tool Leantime, according to his LinkedIn profile. That background in team workflow software shows in the product thinking.

It got solid traction on launch day, which tracks. The problem it’s solving is real and well-framed.

For comparison, Viktor is trying to fill a similar coordination gap at the AI-plus-human workflow level, though from a different angle. The space is filling up.

The Verdict

CoChat is solving a real problem with a clear head about what that problem actually is. Most AI agent tooling right now is built for the person who set it up. CoChat is built for the second person, and the third, and the manager who doesn’t know what SSH stands for. That focus is sharp and the product reflects it.

What I’d want to know at 30 days: does the shared thread model actually change how teams work, or does it just replicate the solo experience in a shared window? The coordination thesis only holds if the product creates genuine back-and-forth between humans and agents, not just parallel outputs in the same UI.

At 60 days: how does the security audit feature perform against real-world configurations? The promise is actionable remediation, not just findings. That’s a harder product to build than it sounds.

At 90 days: retention. Teams that adopt AI agent infrastructure tend to see one of two outcomes: the infrastructure either becomes load-bearing and sticks hard, or it gets abandoned once the initial setup enthusiasm fades. CoChat's bet is that making it accessible to the whole team raises the adoption floor. That's a reasonable bet. The open question is whether the collaborative thread design is differentiated enough to hold attention against whatever tools get built directly into developer workflows over the next quarter.

I’d watch this one carefully. The problem framing alone puts it ahead of most things in this category.