The Macro: AI Fashion Apps Keep Solving the Wrong Onboarding Problem

Here’s the thing about wardrobe apps. They all eventually ask you to do homework. Log your items. Tag your categories. Upload your flats. It’s a thirty-minute chore disguised as a product, and most people bounce before they ever get to the part where the app is actually useful.

The AI styling space has been cycling through this tension for a couple of years now. Plenty of apps have tried to be your personal stylist, and the smarter ones figured out that vision models could do item recognition from photos. But the input method kept being the bottleneck. You still had to deliberately photograph each piece. You had to want it enough to do the work.

Most people don’t.

That’s not a dig at the users. It’s just product reality. The activation rate for any app that requires significant pre-work before showing value is going to be rough, and fashion apps are a particularly brutal version of this because the competition is literally just opening your closet and looking at it.

What’s interesting right now is that vision models have gotten good enough, and fast enough, that the “photograph everything” step is starting to feel genuinely unnecessary. The question was always whether someone would redirect the camera from closet shelves to the user’s existing photo library. Selfies are, functionally, already a fashion archive. Most people have hundreds of them. The signal is right there.

The broader iOS app market is healthy enough that a focused utility with strong retention mechanics can build real traction. Apple’s services numbers have been consistently strong, and the App Store continues to be a viable distribution path for consumer AI utilities. The opportunity window for AI-native personal apps is real, even if the space is getting crowded fast.

The companies that are going to win here are the ones that eliminate friction at the very first step. That’s a product insight, not a features list.

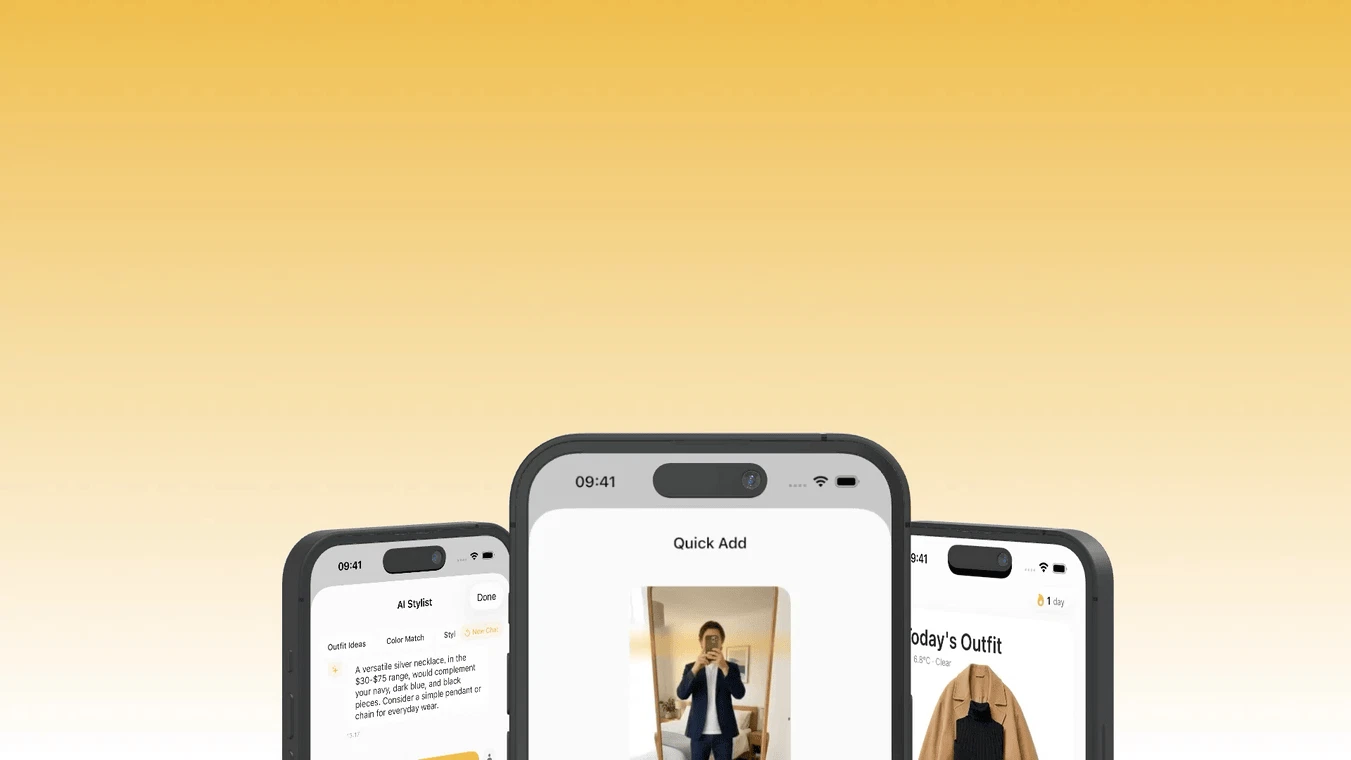

The Micro: Selfies In, Outfits Out, and a Widget You’ll Actually Check

Layered’s core bet is simple: instead of asking you to catalog your wardrobe, it reads your existing selfies and builds the closet model from those. You already took the photos. The app just processes them.

From there, the feature set is actually pretty complete. You get daily outfit suggestions from clothes you already own. Drop in a photo of a new dress and it generates a Pinterest-style lookbook from it. That’s a smart framing, because it matches the mental model most people already use when they’re thinking about how to style something new. There’s also a travel capsule feature that factors in your destination, the weather, and your luggage size. Packing is a genuinely constraint-based problem, and it’s one I’d want help with.

The utility layer goes further than just outfit picks, too. Cost-per-wear tracking, identifying clothes you never actually reach for, wardrobe cleanup planning. These are features that make the app sticky beyond the novelty phase, which is where a lot of AI consumer apps die.
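Cost-per-wear is simple arithmetic: purchase price divided by the number of times you’ve worn the item. A minimal sketch, assuming the app knows a price and a wear count per item (the `ClosetItem` type and the function names are hypothetical, not Layered’s API):

```python
from dataclasses import dataclass


@dataclass
class ClosetItem:
    # Hypothetical record; the wear count would come from parsed photos.
    name: str
    price: float
    wears: int


def cost_per_wear(item):
    """Price divided by wears, or None for an item that has never been worn."""
    if item.wears == 0:
        return None
    return item.price / item.wears


def cleanup_candidates(items, max_wears=0):
    """Names of items worn at most `max_wears` times."""
    return [i.name for i in items if i.wears <= max_wears]


items = [
    ClosetItem("denim jacket", 120.0, 40),
    ClosetItem("sequin top", 60.0, 0),
    ClosetItem("white sneakers", 90.0, 60),
]

for item in items:
    print(item.name, cost_per_wear(item))
# denim jacket 3.0
# sequin top None
# white sneakers 1.5

print(cleanup_candidates(items))  # ['sequin top']
```

The math is trivial. The hard part is the wear count, which in a selfie-driven app depends entirely on how reliably each photo gets matched to the right item.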

The home screen widget delivering tomorrow’s outfit is a nice touch. It’s the kind of ambient utility that turns an app from something you open occasionally into something that’s just part of your day.

The riskiest bet here is selfie parsing accuracy. The whole value proposition collapses if the app misreads what you’re wearing, or if it only works well when you’re in good lighting against a clean background. Real selfies are chaotic. I’d want to know how gracefully it handles the messy cases.
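One way to put a number on "misreads what you’re wearing" is to compare the items detected in a photo against a hand-labeled list of what was actually worn. This is a generic precision/recall sketch under that assumption, not anything Layered has published, and the labels are made up for illustration.

```python
def parsing_scores(detected, actual):
    """Return (precision, recall) for one photo.

    precision: share of detected items that were really in the photo.
    recall: share of items in the photo that the parser found.
    """
    detected, actual = set(detected), set(actual)
    hits = detected & actual
    precision = len(hits) / len(detected) if detected else 0.0
    recall = len(hits) / len(actual) if actual else 0.0
    return precision, recall


# Hypothetical example: a layered outfit shot in dim lighting.
actual = ["navy blazer", "white tee", "black jeans", "grey scarf"]
detected = ["navy blazer", "white tee", "blue jeans"]  # wrong color, missed scarf

precision, recall = parsing_scores(detected, actual)
print(round(precision, 2), recall)  # 0.67 0.5
```

Low precision means phantom items polluting the closet. Low recall means clothes you own that never show up in suggestions. Either one sends the user back to manual corrections.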

Layered did solid numbers at launch, which suggests the framing resonated with people who’ve tried the “photograph everything” competitors and found them exhausting.

The smartest decision they made is positioning the input method as the differentiator. That’s the right thing to lead with.

The Verdict: Clever Enough to Win, If the Vision Model Actually Holds Up

I think Layered has a real shot, but for a specific reason that isn’t obvious from the marketing.

The fashion app category has failed repeatedly not because people don’t want styling help, but because the onboarding cost was always higher than the perceived value. Layered fixes the onboarding cost. That’s not a small thing. Onboarding cost is the core reason this category has struggled, and addressing it directly is a meaningful move.

What I’m less sure about is accuracy. The entire product is built on the assumption that selfie parsing is good enough to construct a reliable wardrobe inventory. If it misidentifies colors, misses items, or struggles with layered outfits (the irony is right there in the name), then the downstream recommendations fall apart and you’re back to manually correcting a database, which is exactly the problem they’re trying to solve.

The feature that will determine whether this app has a two-year lifespan is the selfie parsing quality. Not the lookbooks, not the travel capsules. If the closet model it builds from your photos is actually accurate, the rest of the features work. If it’s shaky, no amount of downstream functionality saves it.

I’ve watched enough AI consumer apps, including some we’ve covered here, such as AI companions and tools rethinking creative workflows from first principles, to know that vision model quality is always the question that matters most in year one.

My prediction: if the parsing is solid, Layered builds a genuinely loyal user base among people who’ve bounced from every other wardrobe app. If it’s inconsistent, it becomes another interesting idea that didn’t survive contact with real-world photo libraries.