The Macro: On-Call Is Broken and Everyone Knows It

Site reliability engineering is one of those disciplines that sounds prestigious until you learn what it actually involves at 2 a.m. Someone’s service is down, the traces are noisy, the logs are spread across four tools, and one exhausted engineer is manually correlating all of it while Slack fills up with stakeholders asking for updates. The job has always been part detective work, part firefighting, and almost entirely reactive.

The infrastructure monitoring space has been trying to solve this for years. Datadog, Dynatrace, and New Relic built empires on giving engineers better visibility. Grafana and Prometheus gave teams open-source alternatives. What all of them share is an assumption: a human is still in the loop, reading the dashboard, interpreting the signals, deciding what to do. The tooling got better. The fundamental loop stayed the same.

Kubernetes made this harder, not easier. The abstraction is powerful but the operational surface area is enormous. Pods, deployments, services, ingress, namespaces, node pressure. A regression introduced in one deployment can ripple in ways that aren’t obvious without correlating metrics, traces, and logs across multiple components simultaneously. Most teams can’t hire enough SREs to do that well. The ones who can afford senior SRE talent are spending it on toil.

The pitch for AI-assisted operations has been floating around for a while. But most implementations I’ve seen are still fundamentally alert summarizers. They take your noisy PagerDuty feed and write a slightly more coherent sentence about it. That’s not nothing, but it’s also not a fix. The interesting question is whether AI can move further down the chain, past detection, past summarization, into actual diagnosis and resolution. That’s where Metoro is trying to plant its flag.

The Micro: eBPF at the Kernel, a Pull Request at the End

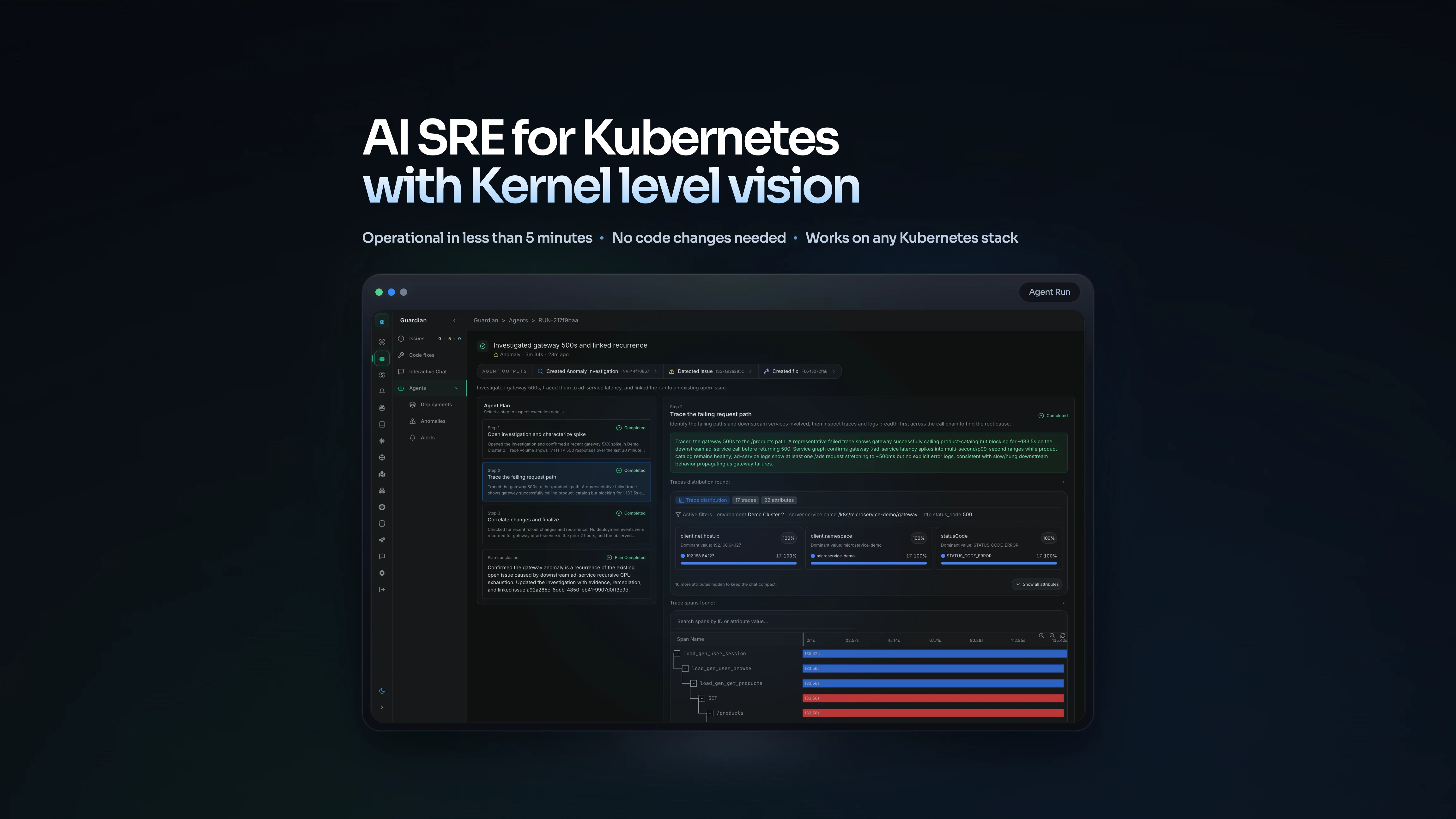

Metoro’s core proposition is aggressive: fully autonomous SRE for Kubernetes environments. Not a smarter dashboard. Not a better alert. The product monitors your cluster, detects an incident, identifies the root cause, and opens a pull request with a proposed fix. You receive a notification. The work is already done.

The technical foundation here is genuinely interesting. Metoro uses eBPF, a kernel-level technology that lets you observe system behavior without instrumenting your application code. That’s a meaningful architectural choice. Most observability tools require you to change something: add a library, deploy an agent, modify your configuration. Metoro claims you install one Helm chart and you’re running in under a minute with no code changes required. For teams that have ever tried to retrofit observability onto a production system, that claim will land with some weight.

eBPF-based telemetry means Metoro brings its own data collection layer. It’s not dependent on whatever you’ve already configured. It sees network traffic, system calls, and process behavior at the kernel level, which gives it a richer picture than application-level instrumentation alone. Whether the AI reasoning layer on top of that data actually produces accurate root causes consistently is the harder question, and one I can’t answer from the outside.

The deployment verification feature is worth understanding separately. Metoro watches every rollout and compares post-deployment behavior against production baselines. If something regressed, it flags what changed and what to do. This is a more defensible use case than fully autonomous incident remediation, because the blast radius of a bad deployment verification call is smaller than a bad auto-fix on a live production incident.

It got solid traction on launch day, which suggests the framing is resonating with people who spend real time in Kubernetes environments. The audience for this product knows exactly what problem it’s describing.

The AI agents doing autonomous operational work pattern is one I’ve been watching across categories. Rocketlane’s approach to running services backoffice autonomously and Pollen’s account monitoring work are both betting on a similar thesis: that the right scope for AI autonomy is the repetitive, high-context, time-sensitive work humans do badly at scale.

The Verdict

Metoro is making a serious technical bet in a real problem space. The eBPF foundation is smart. The zero-instrumentation setup removes a genuine barrier to adoption. And the pull-request-as-output framing is clever because it keeps a human nominally in the loop while still automating the hard part.

My honest concern is the quality and reliability of the AI reasoning layer. Root cause analysis in distributed Kubernetes systems is hard. Not hard as in tedious. Hard as in genuinely ambiguous, context-dependent, and consequential if you get it wrong. An AI that opens a pull request with the wrong fix, and does it confidently, could make incidents worse. I’d want to know what the false positive rate looks like on root cause attribution, and whether the auto-fix feature has any guardrails around high-risk changes.

The 30-day question is whether teams trust the autonomous fix flow enough to actually use it, or whether they treat it as a slightly better observability tool. The 90-day question is retention after they’ve seen a few incidents handled well or badly.

This is a product where the difference between impressive demo and production-worthy tool is entirely about accuracy under real conditions. I’m interested enough to watch it closely. I’m not ready to route my 2 a.m. pages through it yet.